Best AI Governance Platforms Leading the Charge in 2026

98% of organizations have employees using unsanctioned AI tools, yet only 36% have a formal governance framework in place. This guide evaluates eight platforms on what actually matters: whether they enforce policy at runtime or just generate alerts for humans to act on.

The gap between AI policy and AI control is widening. An estimated 98% of organizations have employees using unsanctioned applications, including AI tools. Only 36% have adopted a formal AI governance framework. And 60% of organizations cannot even identify unapproved AI tools in their environments, meaning they cannot govern what they cannot see.

Regulators are not waiting for companies to catch up. The EU AI Act's high-risk system rules take effect August 2, 2026, and the Act's maximum penalties reach €35 million or 7% of global annual turnover, whichever is higher. According to a BSA | The Software Alliance analysis, 113 AI-related bills were enacted into law across US states in 2024. Yet according to a March 2026 European Parliament research report, only 8 of 27 EU member states have designated enforcement authorities.
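To make that exposure concrete, the headline ceiling can be sketched as a simple calculation. This is an illustrative sketch only: it assumes the cap is the higher of the fixed amount and the turnover percentage, the function name and sample turnover figures are hypothetical, and actual fines depend on the infringement category and regulator discretion.

```python
FIXED_CAP_EUR = 35_000_000   # fixed ceiling cited in the text
TURNOVER_PERCENT = 7         # percent of global annual turnover

def max_penalty_eur(global_turnover_eur: float) -> float:
    """Return the headline ceiling: the higher of the fixed cap and 7% of turnover."""
    return max(FIXED_CAP_EUR, global_turnover_eur * TURNOVER_PERCENT / 100)

# Hypothetical turnover figures, chosen only to show where the percentage takes over.
for turnover in (100_000_000, 2_000_000_000):
    print(f"turnover EUR {turnover:,} -> ceiling EUR {max_penalty_eur(turnover):,.0f}")
```

Under these assumptions, the fixed cap dominates until turnover passes €500 million, after which the percentage sets the ceiling.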

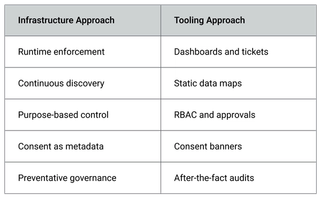

This is the governance gap: AI adoption is accelerating at enterprise speed, but the infrastructure to govern it has not kept pace. A credible AI governance platform closes that gap. Not with dashboards and checklists, but by embedding policy enforcement into the systems where data is accessed, processed, and consumed by AI models.

This guide evaluates eight platforms on what matters most: enforcement depth, data-layer coverage, regulatory alignment, and whether the platform can prove compliance under pressure. We also examine what separates governance infrastructure from governance workflow, and why that distinction determines long-term ROI.

Key takeaways

- AI governance spending will reach $492 million in 2026 and cross $1 billion by 2030, driven by the EU AI Act and expanding global regulation.

- The critical evaluation criterion is enforcement depth: does the platform enforce policy at runtime, or does it generate alerts for humans to act on?

- AI governance requires a data governance foundation. Platforms that govern models without governing the data feeding those models leave a structural gap.

- Eight platforms reviewed across enforcement mechanism, regulatory coverage, data-layer depth, and integration fit.

Why Your Business Needs an AI Governance Platform

Three realities are converging right now, and they leave little room for a wait-and-see approach.

Regulators are enforcing, not just legislating: The EU AI Act's prohibited-practice penalties have been active since February 2025, with high-risk obligations landing in August 2026. But this is not just a European concern.

The Colorado AI Act takes effect in 2026, NYC Local Law 144 is already enforced, and the NAIC Model Bulletin on AI in insurance has been adopted by 24 US states.

For any organization deploying AI across multiple jurisdictions, compliance is no longer a single-framework exercise. It is a layered obligation that compounds with every new market you operate in.

Boards are asking hard questions: According to PwC's 2024 Annual Corporate Directors Survey, 57% of corporate boards now hold primary oversight of AI initiatives. That shift signals something important: AI is no longer delegated to engineering leadership alone. It sits on the board agenda alongside financial risk and regulatory exposure.

IDC projects $1 trillion in AI-driven productivity gains by 2026, but a DataRobot survey found that among organizations that experienced negative impacts from AI bias, 62% lost revenue as a direct consequence. The upside and the risk sit in the same room, and boards want to know which one their organization is managing for.

Ungoverned AI is an unmanageable liability: Workday faces active litigation over AI-driven hiring discrimination. Google paid $170 million for COPPA violations tied to algorithmic data use. A Lehigh University study found a 28% disparity in how LLMs flag mortgage applications by demographic group.

These are not hypothetical scenarios. They are active cases with real financial exposure, and each one traces back to the same root cause: AI systems operating without enforceable governance controls. Without that infrastructure, every AI deployment is a liability you cannot audit.

The market reflects this urgency. MarketsandMarkets forecasts AI governance spending will reach $492 million in 2026 and cross $1 billion by 2030, growing at a 45.3% CAGR. The question is not whether to invest. It is what kind of governance infrastructure to build.

Top AI Governance Platforms for Compliance, Control, and Auditability

The platforms below were evaluated on five criteria: depth of AI-specific governance capabilities, enforcement mechanism (runtime vs. post-hoc), data-layer coverage, regulatory framework alignment, and integration depth with enterprise infrastructure.

1. Ethyca

Founded in 2018 and headquartered in New York, Ethyca is a data infrastructure platform that embeds governance, consent, and policy enforcement directly into enterprise data systems. Backed by $13.5M in Series A funding, the company takes a fundamentally different approach to governance: rather than layering it on top of existing workflows, Ethyca builds it into the systems where data is accessed, processed, and consumed by AI models.

Key capabilities: The platform is built on Fides, the most-used open-source privacy engineering toolkit, and spans five modular products that cover the full governance chain:

- Helios: continuous data inventory and classification across all connected systems

- Janus: consent orchestration that tracks and enforces user preferences at scale

- Lethe: automated data subject request fulfillment and de-identification

- Astralis: AI-specific policy enforcement at the point of data use

- Fides: the underlying governance taxonomy that unifies the other four products under a single, machine-readable policy layer

Most governance platforms generate alerts when a policy violation is detected and rely on humans to act. Astralis enforces policy at runtime, before non-compliant data reaches a model during training or inference. Controls execute at machine speed, not through manual review queues.

Case study: SurveyMonkey migrated from OneTrust to Ethyca, cutting DSAR cycles from 7 days to 48 hours. Their team now uses Fides as their source of truth for governance metadata across 4 petabytes of survey data serving 40M+ users. As Craik Pyke at SurveyMonkey put it: "The reason we chose Ethyca is still true today. Which is, it was a partnership, not one vendor selling to someone."

Best for: Organizations investing in consent as infrastructure, with engineering teams capable of integrating governance into CI/CD pipelines and mature data architectures.

Limitations: Ethyca is designed for teams building consent and governance into their data infrastructure, not for organizations looking for a plug-and-play consent banner tool.

Integration requires engineering investment and access to the data layer, which means teams without dedicated privacy engineering resources may face a steeper onboarding curve.

2. Credo AI

Founded in 2020 and headquartered in Palo Alto, Credo AI is a purpose-built AI governance platform that inventories AI use cases across the organization, maps them to applicable regulatory frameworks, and automates evidence generation for audit readiness. Recognized as a Leader in Forrester's AI Governance Solutions Wave (Q3 2025), the platform is used by Fortune 500 companies and integrates with Microsoft, IBM, and Databricks.

Key capabilities:

- AI discovery including shadow AI detection across the organization

- Risk assessment across bias, security, and privacy dimensions

- Pre-built policy packs for the EU AI Act, NIST AI RMF, ISO 42001, SOC 2, and HITRUST

- Automated evidence generation for audit readiness

Integrations: Microsoft, IBM, Databricks, and McKinsey partnerships. Available on AWS and Azure Marketplace.

Notable clients: Mastercard, PepsiCo, Cisco, Autodesk, Chevron, Booz Allen, and Northrop Grumman.

Best for: Organizations that need model-level governance with strong regulatory framework coverage out of the box, particularly teams looking for pre-built policy packs rather than building custom enforcement infrastructure.

Limitations: Governance operates primarily at the model level. Less depth at the data layer, where consent status and legal basis are determined.

3. IBM watsonx.governance

Part of IBM's broader watsonx AI and data platform, IBM watsonx.governance provides enterprise-grade monitoring and lifecycle management for AI models, including those built outside the IBM ecosystem. The platform tracks fairness, quality, and explainability across the model lifecycle and launched Agent Monitoring in Q1 2026 to extend oversight to agentic AI systems.

Key capabilities:

- Fairness, quality, and explainability monitoring with built-in bias detection

- Agent Monitoring for agentic AI oversight (launched Q1 2026)

- Multi-model support across OpenAI, AWS, and Meta models

- Lifecycle governance from development through production

Integrations: AWS (Amazon SageMaker), Microsoft Azure, OpenAI, and Meta models. Supports hybrid cloud and on-premises deployment. Available on AWS Marketplace and Azure Marketplace.

Notable clients: Banco do Brasil, Infosys, USTA (US Open), Deloitte, and EY.

Best for: Financial services organizations and companies deep in the IBM ecosystem. Strong fit for enterprises running agentic AI at scale.

Limitations: Complex deployment, particularly for mid-market organizations. Model-centric governance; less emphasis on the data feeding those models.

4. OneTrust

Headquartered in Atlanta, OneTrust is the category incumbent in privacy and GRC, serving half the Fortune 500. The platform expanded into AI governance with new capabilities announced in March 2026, adding real-time monitoring and agent detection to its existing privacy and compliance infrastructure.

Key capabilities:

- AI use case intake and approval workflows

- Unified asset inventory across privacy, security, and AI systems

- Real-time monitoring and agent detection (new, March 2026)

- NIST AI RMF alignment

- Integrations with AWS, Azure, and Databricks

Integrations: AWS, Azure, Databricks, Snowflake, Microsoft Purview, ServiceNow, Jira, Salesforce, and Okta.

Notable clients: Lumen Technologies, Zendesk, Blackbaud, and Iberia Airlines, alongside more than half of the Fortune 500.

Best for: Large enterprises already using OneTrust for privacy or GRC that want to extend their existing investment into AI governance without adopting a separate platform.

Limitations: Historically workflow-driven. The platform's governance model centers on UI-driven processes and approval queues rather than runtime enforcement. Not engineering-native.

5. Microsoft Purview

Microsoft Purview is Microsoft's unified data security and governance platform, extending its existing data protection capabilities into AI oversight. Rather than operating as a standalone AI governance tool, Purview embeds governance controls directly into the Microsoft ecosystem, covering how sensitive data flows through M365, Azure, and Copilot interactions.

Key capabilities:

- Data Security Posture Management (DSPM) for AI

- Sensitive information protection applied to AI prompts and responses

- Copilot Control System for managing AI assistant behavior (launching April 2026)

- DLP policies extended to AI interactions across the Microsoft stack

Integrations: Native across the Microsoft ecosystem including M365, Azure, Copilot, and Microsoft Fabric. Also extends to third-party AI apps such as ChatGPT and Google Gemini through browser-level monitoring.

Notable clients: Grupo Bimbo, Fannie Mae, and broadly adopted across Microsoft's enterprise customer base, including organizations on M365 E5 licensing.

Best for: Organizations already committed to the Microsoft ecosystem (M365, Azure, Copilot). AI governance capabilities are bundled into E5 licensing or available as add-ons.

Limitations: Limited governance reach outside Microsoft's ecosystem. Oriented more toward data security than full AI lifecycle governance. Several core capabilities are still rolling out through 2026.

6. Securiti

Headquartered in San Jose, Securiti positions itself as a "Data Command Center," combining AI governance with data security, privacy, and compliance in a single platform. The approach is breadth-first: rather than focusing on one governance dimension, Securiti aims to give organizations a unified view across multi-cloud environments, tracking how data moves from storage through to AI model consumption.

Key capabilities:

- AI model discovery across multi-cloud environments

- Data-to-AI lineage mapping showing how training data flows into models

- Alignment with 20+ regulatory frameworks

- OWASP Top 10 protections for LLMs

Integrations: AWS (including Amazon Bedrock and Amazon Q), Azure, GCP, Snowflake, Databricks, and hybrid on-premises environments.

Notable clients: Walker & Dunlop and Sanofi. Broadly adopted across Fortune 500 enterprises, though specific client names are not widely disclosed.

Best for: Organizations running AI workloads across multiple cloud providers that need a single platform covering data security, privacy, and AI governance rather than stitching together separate tools.

Limitations: Breadth of coverage can come at the cost of depth in any single governance dimension. Complex initial setup.

7. TrustArc

Headquartered in San Francisco and recognized as a G2 Leader in privacy management, TrustArc is a privacy-first platform that has extended its existing compliance and risk assessment capabilities into AI governance. The platform's strength is regulatory breadth: it maps AI risk assessments to 130+ standards across multiple jurisdictions, making it a natural fit for organizations that already manage privacy through TrustArc and want to bring AI under the same umbrella.

Key capabilities:

- AI risk assessments mapped to 130+ regulatory standards

- Multi-jurisdictional compliance support

- Benchmarking tools for measuring governance maturity against peers

- Automated DSR processing

Integrations: Not publicly detailed for AI governance specifically. The platform operates primarily through its own PrivacyCentral interface and assessment workflows.

Notable clients: DoubleVerify, Integral Ad Science, and Fortune 100 enterprises. Specific AI governance client names are not widely disclosed.

Best for: Privacy and compliance teams already using TrustArc that need to extend their existing workflows to cover AI risk, particularly across multiple jurisdictions.

Limitations: AI governance is an extension of privacy management, not a purpose-built capability. High manual overhead persists in assessment workflows. Not developer-native.

8. Holistic AI

Headquartered in London, Holistic AI is an end-to-end AI governance platform that covers the full lifecycle from discovery through continuous monitoring. What sets it apart from other newer entrants is its use of Guardian Agents, autonomous monitoring systems that continuously test AI models for bias, risk, and hallucination without manual intervention. The platform has particular depth in NYC Local Law 144 compliance, making it one of the few platforms with specialization in US municipal AI regulation alongside broader EU AI Act, NIST, and ISO 42001 alignment.

Key capabilities:

- Shadow AI discovery across the organization

- Continuous bias, risk, and hallucination testing

- Guardian Agents for autonomous, ongoing model monitoring

- EU AI Act, NIST, ISO 42001, and NYC LL144 compliance alignment

Integrations: Read-only integrations across cloud infrastructure, code repositories, data systems, and enterprise tools. Designed for non-disruptive deployment across existing AI stacks.

Notable clients: Unilever, MindBridge, and Wikipedia (world-first independent audit under the Digital Services Act). Also serves Fortune 500 companies and government bodies, though specific names are not widely disclosed.

Best for: Organizations looking for continuous, automated model monitoring with strong regulatory coverage, particularly those needing NYC LL144 compliance alongside broader international frameworks.

Limitations: Newer entrant with a smaller install base. Less depth at the data layer.

Core Features of an AI Governance Platform

These five capabilities separate infrastructure-grade governance from surface-level tooling. Each one reveals whether a platform enforces policy or merely documents it.

AI Model Inventory and Registry

You cannot govern what you cannot see. 70% of employee AI interactions happen through embedded SaaS features, not standalone tools. Only 18.5% of employees are even aware of their company's AI policies. A governance platform needs continuous discovery, not a one-time static inventory that decays the moment a team adopts a new tool.

Ethyca's Helios provides this continuous discovery layer, maintaining a live inventory of data assets, their classification, and their risk context across all connected systems.
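The difference between a static inventory and continuous discovery can be sketched in a few lines. This is a minimal, generic illustration, not the Helios implementation: the `AIInventory` class, the tool names, and the idea that observations come from SSO logs or SaaS audit feeds are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

@dataclass
class AIInventory:
    """Registry of AI tools that is reconciled on every discovery run."""
    approved: set[str]
    last_seen: dict[str, datetime] = field(default_factory=dict)

    def reconcile(self, observed: set[str]) -> dict[str, set[str]]:
        """Compare freshly observed tools against the approved registry."""
        now = datetime.now(timezone.utc)
        for tool in observed:
            self.last_seen[tool] = now  # record when each tool was last seen
        return {
            "unsanctioned": observed - self.approved,  # in use, never approved
            "idle": self.approved - observed,          # approved, not seen this run
        }

inventory = AIInventory(approved={"copilot", "internal-llm"})
# In practice `observed` would be rebuilt on a schedule from usage signals
# (assumed here: SSO logs, network egress, SaaS audit feeds).
report = inventory.reconcile({"copilot", "chatgpt", "crm-ai-assistant"})
print(sorted(report["unsanctioned"]))  # ['chatgpt', 'crm-ai-assistant']
```

Running the reconciliation on a schedule, rather than once, is what keeps the inventory from decaying when a team adopts a new tool.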

Risk Assessment and Classification

The EU AI Act defines four risk tiers for AI systems. ISO 42001 provides the first certifiable standard for AI management systems. NIST AI RMF structures risk across four functions: Govern, Map, Measure, and Manage.

The critical distinction most platforms miss: classifying the risk of the AI system is only half the problem. The data feeding that system carries its own risk profile, including consent status, legal basis, and purpose restrictions. Platforms that assess model risk without assessing data risk leave a structural gap.

Policy Enforcement at the Point of Data Use

This is where the infrastructure vs. tooling distinction becomes concrete. Most platforms generate alerts or require manual approval when a policy violation is detected. Infrastructure-grade governance enforces policy at runtime, before non-compliant data reaches a model or analytics pipeline.

Ethyca's Astralis operates at this enforcement layer: field-level, purpose-specific controls that execute automatically at the point of data use.
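A rough sketch of what field-level, purpose-specific enforcement means in code follows. It is a generic illustration under stated assumptions, not the Astralis or Fides API: the `FieldPolicy` schema, the `POLICIES` table, and the purpose names are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class FieldPolicy:
    """Purposes for which one field may be used (hypothetical schema)."""
    allowed_purposes: frozenset[str]
    requires_consent: bool = True

POLICIES = {
    "email": FieldPolicy(frozenset({"support"})),
    "survey_answer": FieldPolicy(frozenset({"analytics", "model_training"})),
    "country": FieldPolicy(frozenset({"analytics", "model_training"}),
                           requires_consent=False),
}

def enforce(record: dict, consents: set[str], purpose: str) -> dict:
    """Return only the fields that may be used for `purpose`.

    Fields without a policy are dropped (deny by default).
    """
    allowed = {}
    for name, value in record.items():
        policy = POLICIES.get(name)
        if policy is None or purpose not in policy.allowed_purposes:
            continue  # no policy, or purpose not permitted for this field
        if policy.requires_consent and purpose not in consents:
            continue  # user has not consented to this purpose
        allowed[name] = value
    return allowed

record = {"email": "a@example.com", "survey_answer": "yes",
          "country": "DE", "ssn": "000"}
# The user consented to analytics only, so a training job sees just `country`.
print(enforce(record, consents={"analytics"}, purpose="model_training"))
# {'country': 'DE'}
```

The design point is that the filter sits in the data path: the training or inference job never receives the disallowed fields, so there is nothing for a human to review after the fact.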

Audit Trail and Compliance Reporting

The EU AI Act's Article 50 transparency requirements activate in August 2026. Regulators will expect producible audit trails showing what data an AI system consumed, under what authority, and with what controls applied. For instance, SurveyMonkey's team uses Fides as their "source of truth" for governance metadata, making audit readiness a byproduct of daily operations rather than a quarterly scramble.
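One way to make such a trail producible on demand is an append-only log in which each entry records the three facts above and is chained to the previous entry by hash. The sketch below is illustrative only; the field names and the hash-chaining choice are assumptions, not a description of any vendor's audit format.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder hash for the first entry

def _digest(body: dict) -> str:
    """Stable SHA-256 over the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

def append_entry(log: list, system: str, fields: list,
                 legal_basis: str, controls: list) -> dict:
    """Append a tamper-evident entry; each entry hashes the one before it."""
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "system": system,            # which AI system consumed the data
        "fields": fields,            # what data it consumed
        "legal_basis": legal_basis,  # under what authority
        "controls": controls,        # which controls were applied
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry

def verify(log: list) -> bool:
    """Recompute every hash and confirm the chain is unbroken."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True

log = []
append_entry(log, "churn-model", ["country"], "legitimate_interest", ["field_filter"])
append_entry(log, "churn-model", ["survey_answer"], "consent", ["field_filter"])
print(verify(log))                  # True
log[0]["legal_basis"] = "consent"   # simulate after-the-fact tampering
print(verify(log))                  # False
```

Because entries are written at the moment of data use, the audit record accumulates as a side effect of normal operation rather than being assembled before an inspection.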

Regulatory Framework Alignment

The regulatory surface area is expanding on every front: EU AI Act, NIST AI RMF, ISO 42001, GDPR, HIPAA, the Colorado AI Act, the NAIC Model Bulletin (now adopted by 24 US states), and NYC LL144. By 2030, Gartner projects 75% of world economies will have AI-specific regulation in place.

A governance platform must map controls to multiple frameworks simultaneously. Single-framework alignment is a liability for any organization operating across jurisdictions. Ethyca maintains a regulatory landscape tracker covering global privacy and data regulation through 2026 and beyond, alongside detailed GDPR compliance documentation for teams operating under EU data protection requirements.
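Mapping one control to several frameworks at once is essentially a many-to-many lookup. The sketch below shows the idea; the control names and the control-to-framework assignments in `CONTROL_MAP` are hypothetical placeholders for illustration, not a legal determination of what any framework requires.

```python
# Hypothetical mapping: each internal control lists the frameworks it supports.
CONTROL_MAP = {
    "model-inventory": {"EU AI Act", "NIST AI RMF", "ISO 42001"},
    "bias-audit": {"NYC LL144", "Colorado AI Act", "EU AI Act"},
    "consent-enforcement": {"GDPR"},
}

def coverage(implemented: set, required: set) -> dict:
    """Report which required frameworks are touched by implemented controls."""
    covered = set().union(*(CONTROL_MAP.get(c, set()) for c in implemented))
    return {"covered": covered & required, "gaps": required - covered}

result = coverage(
    implemented={"model-inventory", "bias-audit"},
    required={"EU AI Act", "GDPR", "NYC LL144"},
)
print(sorted(result["covered"]))  # ['EU AI Act', 'NYC LL144']
print(sorted(result["gaps"]))     # ['GDPR']
```

Keeping the mapping as data means a new regulation becomes a new entry in the table rather than a new governance architecture.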

How to Select the Right AI Governance Platform as a Business

Five evaluation criteria separate a productive investment from an expensive shelf product.

1. Map your AI use cases and data obligations first: Before evaluating any platform, document where AI consumes data, what consent or legal basis governs that data, and where traditional data mapping falls short. As Ramp's Michael Razeeq put it: "I wanted a system our engineers would be excited to use and build on. That's how you create lasting ownership."

2. Distinguish model governance from data governance: Some platforms govern the model: bias testing, explainability scores, risk classification. Others govern the data feeding the model: consent status, purpose restrictions, legal basis. The strongest platforms cover both. Know which problem you are solving.

3. Evaluate enforcement depth, not just visibility: This is the single most important question in any vendor evaluation. Two platforms can both claim "AI governance" and look similar on a feature checklist, but behave completely differently when a policy violation occurs. The table below captures the core distinction:

Ask each vendor one question: what happens when a policy violation is detected? If the answer is "an alert is generated," you are buying a monitoring tool. Visibility without enforcement is monitoring, not governance.

4. Assess integration with your existing infrastructure: Governance that sits outside your data stack creates friction. The platform should integrate with your databases, cloud providers, SaaS tools, and CI/CD pipelines natively. For financial technology company Ramp, the decisive factor was finding governance that engineers would build on, not work around.

5. Plan for emerging regulatory obligations: The August 2026 EU AI Act deadline is the next major milestone, but it will not be the last. Evaluate whether a platform can adapt to new frameworks without requiring a rebuild of your governance architecture. More countries will introduce AI regulations, and existing ones will continue to evolve; a platform locked to one framework creates compounding technical debt with each new mandate.

Why Businesses Choose Ethyca for AI Governance Needs

Most platforms in this guide monitor AI risk. Ethyca enforces against it. Here is what that looks like in practice.

Privacy-as-Code via Fides: Legal requirements are codified into machine-readable policy using Fides, the most-used open-source privacy engineering toolkit. Engineers implement governance the same way they implement any other system requirement. The IAPP called it directly: "The possibilities afforded by Fides developer tools are astonishing."

Runtime enforcement, not post-hoc review: Astralis enforces policy at the moment data is accessed. This is not an alert system. It is a control plane that prevents non-compliant data from reaching AI models.

Full-stack coverage: No other platform covers the complete chain: discover data (Helios), enforce consent (Janus), automate rights (Lethe), and govern AI (Astralis), all under one taxonomy (Fides).

Open source foundation: Fides is fully inspectable on GitHub. Engineering teams trust what they can audit.

Forward-deployed engineering: Ethyca works as a technical partner, not a SaaS vendor. For complex architectures like those at The New York Times and Ramp, this model unlocks governance depth that self-serve tools cannot reach.

The results are measurable: 744M+ preferences processed annually, 4M+ access requests handled, $74M+ saved through automation, and 200+ global brands running on the platform.

Talk to Ethyca's team to evaluate how governance as infrastructure applies to your AI stack.
