What is AI governance? A complete guide for 2026

AI governance is the operating framework for approving, monitoring, and controlling AI systems with continuous, audit-ready evidence. It defines who can make decisions about AI, what evidence those decisions must produce, and how controls are enforced across the full lifecycle: from use-case approval and data access through change control, monitoring, incident response, and audit-ready documentation.

INTRODUCTION

Key takeaways

- What it is: AI governance is the operating framework for approving, monitoring, and controlling AI systems with continuous, audit-ready evidence.

- Why it matters now: Regulators, auditors, and enterprise buyers require evidence of AI controls, not just policies.

- Why data governance isn't enough: Data governance controls storage and access. AI governance controls use: training, inference, automated decisions.

- Where programs fail: Unclear ownership, missing visibility, manual reviews, unenforced data boundaries, and documentation without evidence.

- What works: Enforceable controls at the infrastructure layer, continuous monitoring, and evidence produced as a byproduct of operations.

Overview

- Key takeaways

- What is AI governance?

- What does AI governance cover?

- AI governance frameworks: NIST, ISO, and standards

- What AI governance is not

- AI governance in the context of privacy and global regulations

- Why does AI governance now sit in compliance?

- How is AI governance different from data governance?

- What are the most common AI governance challenges and how to overcome them?

- AI governance framework: 10-step checklist

- What's next for AI governance

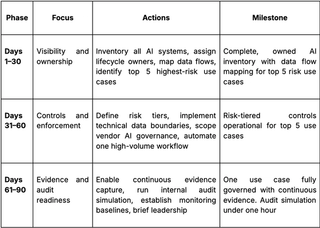

- Quick 30/60/90-day AI governance starter plan

- FAQs

When an AI system produces a discriminatory outcome, the first question is never “what model was it.” The question is: who is accountable, and can you prove what happened?

That question used to surface only during crises: a regulatory investigation, a public incident, a legal challenge. Today, it shows up far earlier: in deal rooms, procurement reviews, and audit calls.

A buyer sends a security and compliance questionnaire, and suddenly half the questions are about AI. Where is AI used? What data does it touch? Who approved it? How do you monitor drift? What happens when the system is wrong?

The difference between these scenarios is timing. But the question is always the same: can you demonstrate control? Not describe your approach, but demonstrate it, with documented approvals, enforceable controls, and audit-ready evidence that holds up under scrutiny.

Most organizations can answer with principles. Very few can answer with proof. That gap is where deals stall, regulatory exposure grows, and AI programs quietly become liabilities instead of advantages. Closing that gap is what AI governance actually exists to do.

This guide breaks down what AI governance looks like in practice: what it means operationally, why it's now a compliance function, and what a workable program must produce to stand up to real scrutiny.

What is AI governance?

AI governance is the operating framework that determines how AI systems are approved, deployed, monitored, and retired inside an enterprise. It encompasses the policies, technical controls, and oversight mechanisms that produce continuous, audit-ready evidence across the full AI lifecycle, from use-case approval through production monitoring and incident response.

Most programs fail here for a familiar reason: they confuse governance with policy.

According to RAND Corporation research, an estimated 80% of AI projects fail, roughly twice the failure rate of traditional IT projects.1 One root cause RAND identifies is that many organizations "do not have adequate infrastructure to manage their data and deploy completed AI models." A PDF, an ethics committee, or a model card doesn't enforce anything in production. AI governance only works when it governs the real operating surface: the infrastructure where data flows, decisions are made, and risk actually lives.

In enterprise environments, that means creating structure around decisions that would otherwise stay ambiguous: who can approve a use case, what data the model is allowed to access, how updates are reviewed, and what signals teams must monitor once a system is in production.

Because AI shifts constantly (vendors push updates, prompts get rewritten, data gets reused), governance has to operate as a continuous control loop. It updates when the AI system updates. It escalates when risk increases. It documents what happened as it happens, not after the fact.

Which brings us to the next question: what exactly lives on that operating surface, and what does a complete AI governance scope actually include?

What does AI governance cover?

Once you move past principles and policy statements, AI governance covers the operating surface: the approvals, data flows, updates, and outcomes that shape how an AI system behaves every day.

It begins with:

- Use-case approval and risk tiering — deciding what’s allowed, restricted, or prohibited.

- Data permissions and purpose limits — what the model can see, why it can see it, and how that’s enforced.

- Vendor/model intake — reviewing third-party systems, retention terms, subprocessors, and silent model updates.

- Deployment gates and change control — versioning, prompt updates, retraining triggers, and when something requires re-approval.

- Continuous monitoring — drift, bias signals, anomalous behavior, safety flags, and performance changes.

- Incident response — how issues are detected, triaged, fixed, and documented.

- Exceptions and risk acceptance — who approved the exception, what conditions apply, and when it expires.
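The last item on that list, time-bound risk acceptance, is the one most often left open-ended. Below is a minimal Python sketch of an exception register in which every exception carries a named approver, its conditions, and a hard expiry date, so lapsed exceptions can be found mechanically. The `RiskException` class, its fields, and the sample entries are hypothetical illustrations. They are not part of any standard or product API.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class RiskException:
    """A documented, time-bound exception to an AI governance control (hypothetical model)."""
    use_case: str
    approved_by: str   # named person who accepted the risk
    conditions: str    # conditions under which the exception holds
    expires_on: date   # hard expiry date: no open-ended exceptions

    def is_active(self, today: date) -> bool:
        # Valid up to and including the expiry date.
        return today <= self.expires_on


def expired_exceptions(register: list[RiskException], today: date) -> list[RiskException]:
    """Return exceptions that have lapsed and must be renewed or closed."""
    return [exc for exc in register if not exc.is_active(today)]


register = [
    RiskException("resume-screening", "J. Doe", "human review of every rejection", date(2026, 3, 31)),
    RiskException("support-chatbot", "A. Lee", "no personal data in prompts", date(2025, 12, 31)),
]
lapsed = expired_exceptions(register, today=date(2026, 1, 15))
print([exc.use_case for exc in lapsed])  # ['support-chatbot']
```

Running a check like this on a schedule turns "when does it expire?" from a document lookup into a control that fires on its own.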

But what framework should an enterprise actually build this on? The answer starts with two resources that together define the operational standard for AI governance.

AI governance frameworks: NIST, ISO, and standards

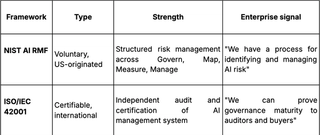

Enterprises building an AI governance program don't start from scratch. Two frameworks now anchor how organizations structure AI risk management and demonstrate governance maturity: the NIST AI Risk Management Framework (AI RMF) and ISO/IEC 42001.

NIST AI Risk Management Framework (AI RMF 1.0)

Released in January 2023 by the National Institute of Standards and Technology (NIST), the AI RMF is the leading US-originated framework for AI risk management.2 It is voluntary, sector-agnostic, and designed to be operationalized by organizations of all sizes.

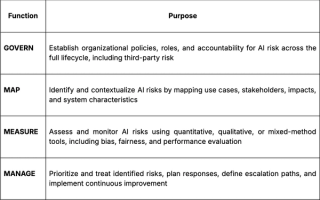

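The framework is organized around four core functions:

- GOVERN: cultivate a culture of risk management through policies, processes, and accountability structures.

- MAP: establish the context of an AI system and identify risks related to that context.

- MEASURE: assess, analyze, and track identified risks.

- MANAGE: prioritize risks and act on them based on projected impact.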

GOVERN applies across all AI risk management processes. MAP, MEASURE, and MANAGE are applied to specific AI systems at specific lifecycle stages.

What makes the AI RMF operationally significant for enterprises is its treatment of AI as socio-technical: risks emerge not only from models and data but also from how people build, deploy, and use them. This aligns directly with the governance challenges covered later in this guide: ownership gaps, missing visibility, and controls that don't keep pace with how systems actually operate.

NIST also publishes a companion Playbook, crosswalks that map the AI RMF to other frameworks, and a Generative AI Profile (NIST AI 600-1, released July 2024) that addresses risks specific to generative AI systems.3

For enterprises mapping AI governance to existing compliance programs, the AI RMF integrates naturally with NIST's Cybersecurity Framework and Privacy Framework, enabling organizations to extend existing risk management processes to cover AI without building a parallel governance structure.

ISO/IEC 42001: Certifiable AI Management System

Where the NIST AI RMF provides structure, ISO/IEC 42001 provides certification. Published in 2023, it is the world's first certifiable AI management system standard, which means organizations can be independently audited and certified against it.

This matters for procurement. Enterprise buyers are beginning to reference ISO 42001 alongside SOC 2 and ISO 27001 in vendor assessments. Organizations that hold certification can demonstrate governance maturity in a format procurement teams already understand.

Certifications are accelerating:

- KPMG International became the first Big Four entity to achieve certification (late 2025)4

- Microsoft achieved certification for Microsoft 365 Copilot

- Synthesia, used by 70% of Fortune 100 companies, achieved certification to demonstrate responsible AI practices to enterprise buyers

How NIST AI RMF and ISO 42001 work together

Most mature AI governance programs use both: the NIST AI RMF to structure internal risk management processes, and ISO 42001 to certify and demonstrate those processes externally. The frameworks are complementary, not competing.

What AI governance is not

Short answer: It’s not a policy deck, an ethics committee with no authority, or data governance dressed up with new vocabulary. AI introduces drift, automation, and high-stakes decisions at scale, so the governance model has to match that reality with controls you can actually enforce, and evidence you can actually defend.

That operating surface is expanding, and the reason it's expanding is that privacy regulation and AI regulation are converging on the same set of demands.

AI governance in the context of privacy and global regulations

Privacy law has always revolved around two expectations: control and proof.

What data did you use? Why were you allowed to use it? Who had access? What safeguards were applied? AI complicates these expectations in ways traditional data programs were never designed to handle.

The shift from “data-at-rest risk” to “data-in-motion risk”

Privacy programs historically focused on storage, access, and retention. But AI creates risk in motion: when data is reused for new purposes, when models infer sensitive attributes from seemingly harmless inputs, and when automated decisions move faster than human oversight.

This is why regulators now expect enterprises to trace how sensitive data travels through an AI system end-to-end, not only the data they hold, but the decisions that data fuels.

Why global regulators are converging on the same expectations

Even though privacy and AI laws differ across regions, the underlying demand is the same: show your work.

The EU AI Act moves from guidance to enforceable operational requirements that tighten based on system risk.5 The Colorado AI Act, US state privacy laws, and sector-specific regulations point to the same requirement: if you can’t show what data enters an AI system, the legal basis for using it, and the controls applied, you won’t meet regulatory expectations.

Ramp encountered this as it scaled past $100B in payments.6 Traditional compliance tools couldn’t govern complex B2B financial data or keep pace with emerging AI regulation. Ramp responded by treating privacy as engineering infrastructure: automating data rights fulfillment, standardizing data classification through the Fides taxonomy, and implementing a governance layer that supports regulatory requirements across jurisdictions.

Enterprises already doing this treat regulatory convergence as a current operational requirement.

When AI affects people, governance failures become exposure

Once automated decisions influence hiring, housing, credit, eligibility, fraud detection, or safety, the stakes change. Regulators no longer treat governance gaps as internal process issues. They treat them as external harm.

And when harm is alleged, the question is direct: Can you explain how the system was approved, what data it relied on, and what safeguards shaped its behavior? If the answer is no, regulators assume the worst. Intent does not matter; only evidence does.

This pressure is already arriving through auditors, procurement teams, customers, and partners, often before any regulator gets involved. AI governance has quietly become a trust prerequisite, and that convergence is why it's no longer an advisory function.

Why does AI governance now sit in compliance?

Artificial intelligence governance didn’t migrate into compliance because of one regulation or one dramatic failure. It migrated because the questions enterprises now face about AI are compliance questions, and always have been.

When a regulator asks how a dataset was approved for model training, that’s compliance. When an auditor asks whether consent boundaries were respected during inference, that’s compliance. When procurement asks for evidence that vendor AI cannot access restricted data categories, that’s compliance. And when the board asks whether the organization can defend its AI controls under scrutiny, that’s compliance too.

What changed isn’t the nature of the questions. It’s the frequency and the stakes.

AI accelerates how data is consumed, reused, and acted upon. Data approved for analytics gets repurposed for training. A vendor enables an AI feature that processes data under terms the original consent didn’t anticipate. A model retrains on inputs that no longer match the purpose boundaries defined at approval. Each of these is a compliance gap, not because the AI misbehaved, but because controls over data use didn’t keep pace with how data was actually consumed.

That’s why governance-as-policy can’t survive scrutiny. When data use changes continuously through model updates, vendor changes, and new workflows, compliance requires continuous evidence that approvals are current, purpose boundaries have held, consent is enforced at the point of use, and exceptions are documented and time-bound.
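Keeping approvals current implies a mechanical test: compare the state an approval was granted against with the system's state today. Below is a minimal Python sketch, assuming an approval records the model version, vendor, purpose, and data categories. These fields, and the names `SystemState` and `reapproval_reasons`, are hypothetical. Any mismatch returns a reason, and any reason means the approval no longer covers what the system is doing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SystemState:
    """Properties an approval was granted against (illustrative fields)."""
    model_version: str
    vendor: str
    purpose: str
    data_categories: frozenset[str]


def reapproval_reasons(approved: SystemState, current: SystemState) -> list[str]:
    """List the changes that invalidate an approval; an empty list means it is still current."""
    reasons = []
    if current.model_version != approved.model_version:
        reasons.append(f"model changed: {approved.model_version} -> {current.model_version}")
    if current.vendor != approved.vendor:
        reasons.append(f"vendor changed: {approved.vendor} -> {current.vendor}")
    if current.purpose != approved.purpose:
        reasons.append(f"purpose changed: {approved.purpose} -> {current.purpose}")
    # Data categories the system now consumes that were never approved.
    new_data = current.data_categories - approved.data_categories
    if new_data:
        reasons.append(f"new data categories: {sorted(new_data)}")
    return reasons


approved = SystemState("v1.2", "acme-llm", "analytics", frozenset({"usage_metrics"}))
current = SystemState("v1.3", "acme-llm", "analytics", frozenset({"usage_metrics", "email"}))
for reason in reapproval_reasons(approved, current):
    print(reason)
# model changed: v1.2 -> v1.3
# new data categories: ['email']
```

Which changes should trigger re-approval is a policy decision. The sketch simply treats every listed change as material.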

When governance operates as a compliance function, with enforceable data boundaries, change control, and audit-grade evidence, two things happen.

- One: issues are caught before they scale across customers, markets, or business lines.

- Two: organizations can prove control to regulators, auditors, and enterprise buyers, which is now table stakes for selling into regulated sectors.

That proof is what separates organizations that talk about responsible AI from those that can close deals in regulated markets. But here's where most enterprises hit the next wall: they assume their existing data governance program already provides this proof.

It doesn't. Here's why.

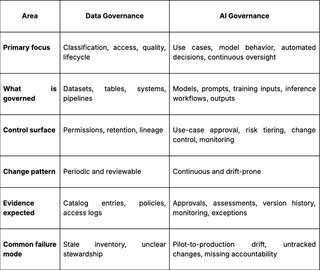

How is AI governance different from data governance?

If your organization has a mature data governance program, you might assume the hardest work is done. Data is classified, access is controlled, retention policies are enforced, and the infrastructure is in place.

That assumption is where the gap opens.

Data governance controls how data is stored, classified, and access-controlled. AI governance controls how data is used in training, inference, and automated decisions. An organization can have every data governance control in place and still face significant AI governance exposure, because data governance was never designed to govern what happens when data enters an AI workflow.

LinkedIn's €310 million GDPR fine illustrates this precisely.7 In October 2024, Ireland's Data Protection Commission found that LinkedIn processed member data for behavioral advertising and targeted content without a valid legal basis. The data itself was properly classified and stored; data governance was functioning. But LinkedIn fed behavioral signals (engagement patterns, scroll behavior, content interactions) into algorithmic systems that inferred sensitive characteristics like likelihood of job change or professional burnout, then used those inferences for ad targeting. Consent was neither specific nor informed. Legitimate interest was outweighed by users' rights. Contractual necessity didn't apply.

In other words, the data was governed; the use of that data was not.

That's the gap:

- Data governance asks: Is this data classified, stored correctly, and access-controlled?

- AI governance asks: Is this data being used in a way that's authorized, purpose-bound, and defensible, and can you prove it?

When data moves from storage into inference, training, or algorithmic decision-making, new questions arise that data governance was never designed to answer: Was this dataset approved for this specific use case? Does the original consent basis cover this new purpose? Who authorized the change? What happens when the model is updated and the data's context shifts?

Without controls at the point of data use, the gap between what's documented and what's enforced grows every time a new model, pipeline, or vendor is added.

Data governance is a prerequisite. AI governance is what ensures data is used the way it was authorized to be used. And the distance between those two, between storage controls and use controls, is where most enterprise AI governance programs break down.

Let’s look at exactly where.

What are the most common AI governance challenges and how to overcome them?

Every enterprise AI governance program we've seen hits the same walls. Not because teams don't care, but because governance is built like a policy layer while AI operates like a production system. Here are the five failure modes that show up again and again, and what it looks like when they're solved.

1. Ownership is unclear, so accountability defaults to no one

In many organizations, AI work is spread across data science, product, legal, privacy, security, and procurement. Everyone owns a slice; no one owns the outcome. When an automated decision becomes contested, responsibility dissolves.

The Case of Mobley v. Workday: Who's accountable when AI screens applicants?

Mobley v. Workday, Inc. shows what this looks like when it reaches the courts.8 Workday's AI-powered applicant screening tools were used by thousands of employers to filter job candidates. When the system was alleged to disproportionately reject applicants based on age, race, and disability, the core question wasn't whether the AI was biased. It was who was accountable for the outcome.

Workday argued it was a software provider. The employers assumed the vendor was handling compliance. The court allowed the case to proceed on the theory that Workday acted as an "agent" of the employers, meaning both vendor and enterprise could share liability. In May 2025, the case was certified as a nationwide collective action, potentially implicating hundreds of millions of applicants.

The pattern matters here. When ownership of an AI system's behavior is distributed without structure, liability doesn't concentrate. It spreads. And when regulators or courts come looking, they don't accept "that was another team's responsibility" as a defense.

How to fix it

Assign explicit lifecycle ownership so responsibility cannot vanish under pressure.

- A named owner for each AI use case

- A named owner for the model in production

- A named owner for data inputs and purpose boundaries

- A named owner for monitoring, incidents, and corrective action

Ownership doesn't mean one person does everything. It means one person is accountable for ensuring it gets done, and can prove it.
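One way to make "responsibility cannot vanish" testable is to refuse to treat a use case as governed until every lifecycle role has a named owner. Below is a minimal Python sketch. The role keys mirror the four bullets above, while the key names, the `missing_owners` helper, and the sample owners are hypothetical.

```python
# The four lifecycle roles from the list above; the key names are illustrative.
REQUIRED_ROLES = ("use_case", "model_in_production", "data_inputs", "monitoring_and_incidents")


def missing_owners(owners: dict[str, str]) -> list[str]:
    """Return lifecycle roles that have no named (non-blank) owner."""
    return [role for role in REQUIRED_ROLES if not owners.get(role, "").strip()]


owners = {
    "use_case": "Head of Talent Acquisition",
    "model_in_production": "ML Platform Lead",
    "data_inputs": "",  # unassigned: accountability would default to no one
}
print(missing_owners(owners))  # ['data_inputs', 'monitoring_and_incidents']
```

A check like this can run at intake and again whenever people change roles, so gaps surface before an incident does.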

2. No visibility into where AI is actually used

You can’t govern what you can’t see. Most enterprises underestimate how much AI is already live, because AI often arrives through vendors, defaults, and local team experiments that never went through formal approval.

When asked where AI is running and what data it touches, many organizations simply cannot answer.

This isn’t an edge case. RAND Corporation research found that a major driver of AI project failure isn't model performance; it's that many organizations lack the infrastructure to manage their data and deploy completed models. What's missing is the governance layer that would make deployment sustainable.

How to fix it

Treat AI discovery like an inventory and enforcement problem.

- Maintain a living inventory of every AI use case, including vendor features and local team experiments

- Capture ownership, purpose, data categories, vendor/model details

- Tie inventory updates to change workflows so new AI cannot appear “off-books”
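Below is a minimal Python sketch of the inventory as an enforcement point rather than a spreadsheet. Registration rejects incomplete entries, and the deployment step asks the inventory whether a use case exists before anything ships. `InventoryEntry`, `AIInventory`, and `deployment_gate` are hypothetical names. In practice the gate would be called from whatever change or CI workflow your teams already use.

```python
from dataclasses import dataclass


@dataclass
class InventoryEntry:
    """One AI use case in the living inventory (fields mirror the list above)."""
    name: str
    owner: str
    purpose: str
    data_categories: set[str]
    vendor_model: str  # e.g. "in-house" or "<vendor>/<model>"


class AIInventory:
    def __init__(self) -> None:
        self._entries: dict[str, InventoryEntry] = {}

    def register(self, entry: InventoryEntry) -> None:
        # Refuse incomplete registrations so gaps surface at intake, not at audit time.
        if not (entry.owner and entry.purpose and entry.data_categories and entry.vendor_model):
            raise ValueError(f"incomplete registration for {entry.name!r}")
        self._entries[entry.name] = entry

    def deployment_gate(self, name: str) -> bool:
        """Called from the change workflow: only registered use cases may deploy."""
        return name in self._entries


inventory = AIInventory()
inventory.register(InventoryEntry("invoice-triage", "Finance Ops Lead", "route invoices",
                                  {"vendor_invoices"}, "in-house"))
print(inventory.deployment_gate("invoice-triage"))  # True
print(inventory.deployment_gate("shadow-chatbot"))  # False: never registered
```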

3. Manual reviews don’t scale, so teams bypass governance or stop shipping

If approvals are slow or unpredictable, teams adapt. Some bypass governance to meet deadlines; others avoid proposing new use cases entirely. Both outcomes are governance failure.

In AI governance, "manual review" is a chain of bottlenecks:

- A data access request that takes two weeks because a compliance team has to evaluate purpose and consent from scratch every time.

- A use-case approval that requires multiple meetings because there's no predefined risk tier.

- A DSAR that takes seven days because each request is routed, reviewed, and fulfilled by hand.

- A vendor assessment that stalls because there’s no structured process for evaluating what data a third-party AI feature can access.

When every decision requires human-in-the-loop review with no standardized criteria, governance becomes the thing teams work around, not the thing that enables them to ship.

The Case of Louis v. SafeRent Solutions: What happens when bias testing doesn't exist?

Louis v. SafeRent Solutions shows what happens when informal oversight reaches a high-stakes domain.9 SafeRent's algorithmic tenant screening tool produced scores that landlords used to accept or reject rental applicants. The algorithm relied heavily on credit data and did not account for housing vouchers, resulting in disproportionately lower scores for Black and Hispanic applicants. Landlords had no visibility into how scores were calculated. SafeRent's own process lacked the structured review (validated data inputs, documented purpose boundaries, bias testing) that could have caught the discriminatory pattern before it scaled across thousands of housing decisions.

The case settled in 2024 for over $2.2 million, with SafeRent required to remove its scoring feature for voucher holders and submit any future scoring models to third-party validation.

The lesson for enterprise AI governance is direct: when reviews are ad hoc, they don't just slow teams down; they fail to catch the issues they exist to prevent.

How to fix it

Create a predictable, risk-tiered review model.

- Classify AI use cases by risk

- Map each tier to predefined controls, depth of review, and required evidence

- Allow low-risk use cases to move quickly with lightweight gates

- Automate repeatable work, such as DSAR fulfillment, data access approvals, and consent evaluation, so human review is reserved for decisions that require judgment
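A predictable review model means the tier, and therefore the review depth and required evidence, follows from a few recorded answers rather than from a meeting. Below is a minimal Python sketch, assuming three yes/no screening questions. The questions, tier names, and control mapping are illustrative assumptions; real criteria would come from your own policy or a regulation's risk categories.

```python
from enum import Enum


class Tier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Illustrative tier-to-control mapping: review depth and required evidence per tier.
TIER_REQUIREMENTS = {
    Tier.LOW: {"review": "automated checks", "evidence": ["inventory entry"]},
    Tier.MEDIUM: {"review": "single reviewer", "evidence": ["inventory entry", "risk assessment"]},
    Tier.HIGH: {"review": "cross-functional board",
                "evidence": ["inventory entry", "risk assessment", "bias test", "monitoring plan"]},
}


def classify(affects_individuals: bool, automated_decision: bool, sensitive_data: bool) -> Tier:
    """Assign a tier from three screening questions (a simplifying assumption)."""
    if affects_individuals and automated_decision:
        return Tier.HIGH  # e.g. hiring, housing, or credit decisions
    if sensitive_data or affects_individuals:
        return Tier.MEDIUM
    return Tier.LOW


tier = classify(affects_individuals=True, automated_decision=True, sensitive_data=False)
print(tier.name, TIER_REQUIREMENTS[tier]["review"])  # HIGH cross-functional board
```

Because the mapping is data rather than tribal knowledge, low-risk requests get a fast, known path, and reviewers spend their time on the high tier.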

4. Data boundaries exist on paper, and vendor AI makes it worse

A policy that says "this dataset is approved for analytics only" is meaningless if nothing in the infrastructure prevents that dataset from being pulled into a training pipeline. When data boundaries are documented but not enforced, every new model, pipeline, or integration is an opportunity for boundary violations that no one detects until a regulator or auditor asks.

This gap widens further with vendor AI. Third parties ship AI features rapidly, sometimes enabling them by default. Data that was never meant for automated processing flows into external models. Governance breaks quietly: usage changes before your controls do. And when a vendor pushes a model update, the original risk assessment may no longer reflect what the system does with your data.

The Case of Italy v. OpenAI: When data boundaries aren't enforced

Italy's action against OpenAI is the clearest illustration of where this leads.10 In December 2024, Italy's Garante fined OpenAI €15 million after concluding that personal data had been used to train ChatGPT without adequate legal basis or transparency. The violations weren't about model behavior but about data use. OpenAI could not demonstrate a valid legal basis for processing personal data for training. It had not met GDPR transparency obligations to data subjects. It lacked adequate age verification safeguards. The Garante had already temporarily banned ChatGPT in March 2023 for the same fundamental concern: data was being consumed in ways that existing controls never authorized.

The enterprise pattern: A dataset gets approved for one purpose, then gets pulled into a training or inference pipeline, internally or by a vendor, without re-evaluation. The original consent or legal basis no longer covers the new use, but no technical control prevents it. The documentation says the boundary exists, but the infrastructure doesn't enforce it.

How to fix it

Govern data-in-use, and pull vendors fully into your AI governance scope.

- Specify allowed purpose, data boundaries, and workflows in every AI approval

- Enforce those boundaries through technical controls, not documentation

- Ensure misuse is prevented early, not discovered through an investigation

- Require an AI-specific vendor assessment (data use, retention, subprocessors)

- Define internal enablement rules: who can turn features on, and with which data categories
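"Enforce boundaries through technical controls" can be as simple as a deny-by-default check that every pipeline calls before it reads a dataset. Below is a minimal Python sketch, assuming each dataset's approval records the purposes its legal basis or consent covers. `DatasetPolicy`, `check_access`, and the dataset and purpose names are hypothetical. This illustrates the pattern only and does not reproduce any vendor's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DatasetPolicy:
    dataset: str
    allowed_purposes: frozenset[str]  # purposes the approval and legal basis cover


@dataclass(frozen=True)
class AccessDecision:
    allowed: bool
    reason: str


def check_access(policy: DatasetPolicy, requested_purpose: str) -> AccessDecision:
    """Deny by default: allow access only for purposes the dataset was approved for."""
    if requested_purpose in policy.allowed_purposes:
        return AccessDecision(True, f"{requested_purpose!r} is an approved purpose")
    return AccessDecision(
        False, f"{requested_purpose!r} not approved for {policy.dataset!r}; re-evaluation required"
    )


policy = DatasetPolicy("customer_events", frozenset({"analytics"}))
print(check_access(policy, "analytics").allowed)       # True
print(check_access(policy, "model_training").allowed)  # False
```

The important property is that the analytics-only dataset cannot silently become training data. The request fails, and the denial itself becomes a record someone can review.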

5. Documentation exists, but evidence does not

This is the failure mode that undermines everything else. An organization can have policies for every challenge above (ownership defined, inventory maintained, reviews structured, boundaries documented) and still fail under scrutiny if none of it produces evidence.

Most organizations can show policies. Fewer can show proof that controls actually operated over time. During an audit or investigation, the questions are specific: Show me the approval for this use case. Show me the risk assessment. Show me what changed between v1.2 and v1.3. Show me the monitoring output from the last six months. Show me who authorized the exception and when it expires.

If the answer to any of these is "we'd need to pull that together," the program has a gap, regardless of how comprehensive the policies look.

This is also where the gap between governance programs becomes most visible to enterprise buyers. When a procurement team sends an AI governance questionnaire, they're not asking whether you have policies. They're asking whether you can produce the artifacts. The organizations that can produce them on demand close deals faster. The organizations that need two weeks to assemble documentation are the ones that face follow-up questions, delayed timelines, or disqualification.

How to fix it

Adopt an evidence-first operating model.

- Map every governance requirement to a policy, an enforceable control, and automatic evidence that the control operated

- Capture approvals, changes, and monitoring outputs during normal operations; don’t reconstruct them before audits

- Log exceptions with an owner, conditions, time-bound expiration, and review schedule

- Keep the evidence trail continuous and machine-readable
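Below is a minimal Python sketch of a continuous, machine-readable evidence trail. Each governance event is appended as a JSON-serializable record, and each record carries a SHA-256 hash of its content plus the previous record's hash. Any later edit therefore breaks verification. Hash chaining is one tamper-evidence technique chosen here for illustration. `EvidenceLog` and the event names are hypothetical, and a production system would also need durable, access-controlled storage.

```python
import hashlib
import json
from datetime import datetime, timezone


class EvidenceLog:
    """Append-only, hash-chained JSON records; each entry commits to the one before it."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.records: list[dict] = []

    @staticmethod
    def _digest(body: dict) -> str:
        # Hash a canonical serialization so any later edit to a record is detectable.
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def append(self, event: str, **details) -> dict:
        body = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "event": event,  # e.g. "approval", "access_denied", "exception_granted"
            "details": details,
            "prev_hash": self.records[-1]["hash"] if self.records else self.GENESIS,
        }
        record = {**body, "hash": self._digest(body)}
        self.records.append(record)
        return record

    def verify(self) -> bool:
        """Recompute every hash and link; False means the trail was altered."""
        prev_hash = self.GENESIS
        for record in self.records:
            body = {k: v for k, v in record.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or self._digest(body) != record["hash"]:
                return False
            prev_hash = record["hash"]
        return True


log = EvidenceLog()
log.append("approval", use_case="invoice-triage", approver="Finance Ops Lead")
log.append("access_denied", dataset="customer_events", purpose="model_training")
print(log.verify())  # True
log.records[0]["details"]["approver"] = "someone else"
print(log.verify())  # False: tampering breaks the chain
```

Because records are written when the event happens, "show me the approval" becomes a query rather than a reconstruction project.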

These five challenges are showing up everywhere. And the bar is about to go higher.

AI governance framework: 10-step checklist for scalable programs

- Assign a named owner for every AI use case, covering approval, data inputs, monitoring, and incident response

- Maintain a living AI inventory so no system goes live without registration, risk classification, and ownership

- Classify use cases by risk tier and map each tier to predefined controls, review depth, and evidence requirements

- Enforce data boundaries technically at the point of consumption

- Govern vendor AI under the same controls, audit requirements, and contractual terms as internal systems

- Automate repeatable governance workflows (DSARs, access approvals, consent checks) so human review covers judgment calls only

- Monitor production AI continuously for drift, bias, and performance changes with defined thresholds and escalation paths

- Generate audit evidence automatically as a byproduct of governance operations

- Log every exception with a named owner, documented conditions, and a time-bound expiration

- Ensure audit artifacts (approvals, assessments, change logs, monitoring outputs) can be produced on demand in minutes
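The monitoring item on this checklist ("defined thresholds and escalation paths") is where governance meets statistics. Below is a minimal Python sketch using the Population Stability Index (PSI), one common drift measure. It compares a baseline score distribution from approval time with production scores. The bin count and the 0.1 / 0.25 cut-offs are widely used rules of thumb, not requirements from any framework named in this guide, so treat them as assumptions to tune per use case and risk tier. The function names are hypothetical.

```python
import math


def population_stability_index(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """PSI between a baseline sample and a production sample, using equal-width baseline bins."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp out-of-range production values into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_action(psi: float) -> str:
    """Map PSI to an action using rule-of-thumb thresholds (an assumption)."""
    if psi < 0.1:
        return "ok"
    if psi < 0.25:
        return "investigate"
    return "escalate"  # route to the named monitoring owner


baseline = [i / 100 for i in range(100)]       # scores observed at approval time
shifted = [0.5 + i / 200 for i in range(100)]  # production scores drifting upward
print(drift_action(population_stability_index(baseline, baseline)))  # ok
print(drift_action(population_stability_index(baseline, shifted)))   # escalate
```

The escalation string stands in for whatever ticketing or paging path the monitoring owner uses. Writing each result to the evidence trail is what makes the monitoring provable later.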

What’s next for AI governance

The challenges above are intensifying. The standard for what counts as "AI governed" is rising across audits, procurement, and regulation simultaneously, and the timeline is shorter than most enterprises assume.

Audits are shifting from checklists to lifecycle evidence

Deloitte Australia was forced to refund part of an AU$440,000 government contract in 2025 after AI-generated errors, including fabricated citations and non-existent court references, appeared in a 237-page report delivered to Australia's Department of Employment and Workplace Relations.11 Deloitte had used GPT-4o but failed to detect the errors before delivery and did not disclose its AI use until after they were discovered.

The emerging audit standard is not "do you have a policy?" It's "can you show that controls were active when this output was produced?" Programs that can't produce artifacts on demand (approvals, risk assessments, monitoring outputs) will be treated as incomplete regardless of intent.

Board oversight is accelerating, and funding follows

PwC's 2024 Annual Corporate Directors Survey found that 57% of directors said the full board now has primary oversight of AI, with another 17% assigning it to the audit committee. State lawmakers introduced nearly 700 AI-related bills in 2024, with 113 signed into law.12

When boards ask "can we defend our AI controls?" and the answer requires reconstructing evidence after the fact, governance gets funded. Organizations that can walk leadership through a structured inventory, with use cases mapped to risk tiers, data boundaries, and monitoring evidence, get budget and velocity.

Regulatory enforcement is already live

You don't need a single "killer law" for enforcement to become real. It already is:

- EU AI Act: General-purpose AI (GPAI) obligations, including transparency requirements, effective August 2025. High-risk obligations phasing in through 2027. Penalties up to €35M or 7% of global annual turnover.

- Colorado AI Act: Effective 2026. Disclosure and impact assessment for high-risk AI in consequential decisions.13

- NAIC Model Bulletin: Adopted by 24 US states. Documented governance, bias controls, vendor oversight, audit-ready decision logs.14

- NYC Local Law 144: In force. Bias audits required for automated hiring tools, results published.15

These rules converge on a shared expectation: organizations using AI at scale must demonstrate governance through risk assessments, data boundaries, monitoring, and documented accountability. Build with these frameworks now; the organizations that wait will build under pressure.


How Ethyca operationalizes AI governance

Every challenge in this guide traces back to the same structural gap: governance controls that stop at the policy layer and never reach the infrastructure where data is actually consumed. Ethyca closes that gap.

Ethyca’s platform governs data at the point of use, not at the point of storage, in a policy document, or in a quarterly review. When data enters an AI pipeline, a model, a dashboard, or an API query, Ethyca enforces the rules that were supposed to apply all along.

Challenge → How Ethyca solves it

Ownership unclear → Fides, Ethyca's open-source governance taxonomy, defines data categories, usage purposes, and accountability in machine-readable code. Legal defines the rules; engineering enforces them. Both work from the same control plane.

No visibility → Helios continuously discovers and classifies sensitive data across your stack, creating a live metadata layer mapped to regulatory requirements. A living system that reflects how your data actually moves.

Manual reviews don't scale → Lethe automates data subject rights end to end: intelligent routing, data discovery, and policy-driven response logic. Human review is reserved for decisions that require judgment.

Data boundaries on paper → Astralis embeds enforceable policies directly into AI workflows. When a model, dashboard, or API query attempts to access data, Astralis checks purpose, consent, and legal basis in real time. If the purpose doesn't match, the access doesn't happen.

Documentation without evidence → Every policy decision across the platform (every access, every block, every exception) is captured automatically in machine-readable logs. Evidence is a byproduct of normal operations.

What this looks like at scale

SurveyMonkey faced the operational version of these challenges when expanding into financial services, healthcare, and government. Its manual processes (hand-drawn data maps, spreadsheet-based RoPAs, seven-day DSAR cycles) couldn't scale to meet those sectors' compliance demands.

With Ethyca, SurveyMonkey automated governance across four petabytes of sensitive data, cut DSAR fulfillment from seven days to 48 hours, and built a shared governance language across engineering and legal teams that maps directly to regulatory obligations.16

That's the difference between a governance program that produces policies and one that produces proof.

If your governance program can't answer an auditor's question in minutes, it's not infrastructure; it's documentation. Talk to us!

FAQs


1. What evidence do auditors expect from an AI governance program?

Auditors expect lifecycle evidence: documented approvals, risk assessments, purpose boundaries, change logs, monitoring outputs, and exception records, produced continuously.

2. Who is accountable for AI decisions in an enterprise?

Accountability must be explicitly assigned. As the Workday litigation demonstrates, when ownership is distributed without clear structure, liability expands rather than concentrating. Each AI system should have named owners for risk classification, data inputs, monitoring, and incident response.

3. What regulations apply to enterprise AI governance?

The EU AI Act, Colorado AI Act, GDPR, US state privacy laws, NAIC Model Bulletin (24 states), NYC Local Law 144, and sector-specific regulations all converge on the same expectation: organizations using AI at scale must demonstrate documented governance with enforceable controls.

4. What is the NIST AI Risk Management Framework?

The NIST AI RMF is a voluntary, sector-agnostic framework for managing AI risk, organized around four core functions: Govern, Map, Measure, and Manage. Released in January 2023, it is the leading US-originated AI risk management framework and integrates with NIST's Cybersecurity and Privacy Frameworks.

5. Can AI governance be scaled without slowing development teams?

Yes, when governance is embedded into infrastructure rather than layered on as manual review. Risk-tiered approval paths, automated DSR fulfillment, and technical enforcement where data is consumed allow low-risk use cases to move quickly while applying deeper oversight where stakes justify it.

6. How does ISO 42001 relate to AI governance?

ISO/IEC 42001 is the world's first certifiable AI management system standard. KPMG, Microsoft, and Synthesia have achieved certification. Procurement teams are beginning to reference it alongside SOC 2 and ISO 27001 in vendor assessments.

7. What should enterprises do first to build an AI governance program?

Start with visibility: inventory your AI systems, map what data they consume, and assign ownership. Then classify by risk tier, define enforceable controls for each tier, and implement continuous monitoring and evidence generation. Build governance into your data infrastructure from day one.

Our team of data privacy devotees would love to show you how Ethyca helps engineers deploy CCPA, GDPR, and LGPD privacy compliance deep into business systems. Let’s chat!
