Data Protection Impact Assessment (DPIA): Why It Matters and How to Do It Right

Regulators no longer ask whether you comply — they ask whether you assessed the risk before processing began. This guide covers when a DPIA is required, what it must contain, and how to build a process that holds up under audit.

Key Takeaways

- A Data Protection Impact Assessment (DPIA) is legally required under GDPR Article 35 before any processing likely to result in high risk to individuals. Meeting two or more of the nine EDPB criteria typically triggers the obligation.

- Missing a required DPIA is a primary enforcement trigger. In 2024, healthcare organizations faced average GDPR fines exceeding EUR 200,000 per violation, and in at least one of those cases regulators found that no DPIA had been carried out.

- Privacy risks are widely considered cheaper to fix at design stage than after deployment. A DPIA moves risk identification to the earliest viable point in any project.

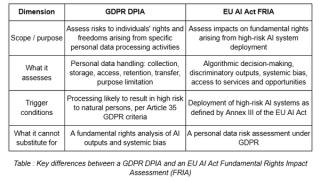

- AI systems create a dual compliance obligation: a GDPR DPIA and an EU AI Act Fundamental Rights Impact Assessment. These are distinct documents and cannot be satisfied by a single assessment.

Organizations that process personal data at scale are no longer evaluated by regulators only on whether they comply with data protection requirements. The evaluation has shifted to whether they can prove they assessed the risk before processing of data began.

A Data Protection Impact Assessment (DPIA) is the mechanism that produces that proof. Required under Article 35 of the General Data Protection Regulation (GDPR), a DPIA is a structured, documented analysis that covers:

- what personal data is involved

- why it is being processed

- whose data it is

- what could go wrong for the individuals, and

- what safeguards reduce that risk to an acceptable level.

Done correctly, it is a structured decision-guiding tool that surfaces privacy risks while they are still addressable.

What Is a Data Protection Impact Assessment (DPIA)?

A Data Protection Impact Assessment is a formal, structured process for evaluating the privacy risks of a data processing activity before it begins.

GDPR Article 35 codified the DPIA requirement for processing likely to result in high risk. The regulation identifies three categories of processing that always require a DPIA:

- Systematic and extensive profiling used for significant decisions about individuals

- Large-scale processing of special category or criminal offence data

- Systematic monitoring of publicly accessible areas on a large scale

Importantly, the scope of a DPIA is not limited to new systems. It applies to any significant change in how personal data is used. Expanding a customer analytics program, deploying a new HR platform, integrating a third-party data vendor, or adding AI-based decision-making to an existing workflow can each trigger the obligation independently.

Beyond regulatory compliance, a DPIA forces cross-functional teams to confront data flows that are often poorly understood. It identifies processing that lacks a clear legal basis and demands explicit decisions about which risks are acceptable before deployment.

Why Data Protection Impact Assessments Are Needed

GDPR did not invent the idea of assessing privacy risk before deploying a system. It made that assessment mandatory and placed the burden of proof on the organization. The GDPR Article 35 requirements codify what accountability looks like in practice: not the intent to protect data, but evidence that you assessed the risk before the fact. That is the accountability standard regulators now enforce.

Missing DPIAs Signal Systemic Failure to Regulators

When a regulator investigates a breach or a complaint, the first question is not what went wrong technically. It is whether the organization knew, or should have known, that the processing carried risk. A missing DPIA answers that question immediately.

Enforcement in the healthcare sector has been particularly severe. The CMS GDPR Enforcement Tracker recorded an average fine of EUR 203,423 per violation in healthcare in 2024, up from EUR 17,500 the year before. In at least one case, a hospital paid EUR 200,000 after a ransomware attack where regulators found no DPIA had been carried out.

Organizations that can demonstrate a completed DPIA are treated differently by regulators, even where a breach occurred.

Privacy Risks Are Cheaper to Fix Early

The economics of privacy risk work the same way as the economics of any engineering defect. A vulnerability caught during design costs a fraction of what it costs after deployment. With privacy, the bill is higher still, because the engineering rework comes with regulatory exposure attached.

DPIAs move risk identification to the earliest viable point in the process. They force a structured review before architecture decisions are locked, before vendor contracts are signed, and before data starts flowing. Teams that treat DPIAs as a late-stage compliance checkbox are not saving time; they are deferring costs that will be significantly larger once the system is live.

Modern Data Environments Are Too Complex for Informal Reviews

Five years ago, a privacy review might involve one team, one system, and a defined dataset. That is no longer the architecture most enterprises operate in. Data now moves across cloud infrastructure, third-party APIs, SaaS vendors, analytics platforms, and AI pipelines, often in parallel, often without a single team holding complete visibility.

Informal reviews fail in this environment. They can only identify risks that are already understood. The risks that cause enforcement action are typically the ones that fall outside the reviewer's frame of reference: a vendor sub-processor that was not mapped, a data category that was not considered, a retention period that was never defined.

DPIAs Create the Audit Trail That Protects You

A completed DPIA creates a dated, documented record of what the organization knew, what it assessed, and what decisions it made before processing began. That record defines the scope of the organization's exposure if something subsequently goes wrong.

Conducting a DPIA without recording it properly offers almost no accountability protection. The record needs to be dated, version-controlled, and tied to a specific processing activity.

AI Systems Demand More Than a Standard Risk Review

AI systems introduce a category of risk that sits outside the standard DPIA frame entirely. A standard DPIA evaluates risks arising from personal data processing. AI changes that risk profile in ways a personal data lens alone cannot capture.

AI systems make decisions that affect individuals at scale. Those decisions can produce discriminatory outcomes, opaque logic, and results that cannot be traced back to a specific processing activity. These are systemic risks that sit outside what a standard DPIA methodology is designed to assess.

The EU AI Act, which entered into force in 2024 with provisions taking effect in phases through 2025-2027, introduces a separate compliance framework for high-risk AI systems.

Organizations deploying AI within that scope face two distinct obligations, each with its own methodology, triggers, and documentation requirements. Treating them as the same exercise creates gaps in both.

Regulatory note

AI systems now trigger two separate assessment obligations: a GDPR DPIA and an EU AI Act Fundamental Rights Impact Assessment (FRIA). These are not interchangeable and cannot be satisfied by a single document.

When Should Organizations Conduct a DPIA?

The timing requirement under GDPR Article 35 is explicit: a DPIA must be conducted prior to the processing. That means before a system is built, not after it is already running. Privacy teams should be involved early enough to influence architecture decisions, not brought in at the end to document a system that has already been configured.

The nine criteria published by the European Data Protection Board provide the clearest framework for determining when a DPIA is required. Meeting two or more of the following typically triggers the obligation:

- Evaluation or scoring of individuals, including profiling and prediction

- Automated decision-making with legal or similarly significant effects

- Systematic monitoring of individuals

- Processing of sensitive or highly personal data

- Large-scale data processing

- Matching or combining datasets from different sources

- Processing data about vulnerable individuals

- Innovative use of technology or novel organizational solutions

- Processing that prevents individuals from exercising rights or accessing services

The threshold is not whether processing is high risk in absolute terms. It is whether it is likely to result in high risk given the nature, scope, context, and purposes of the processing. When there is genuine uncertainty, the default position should be to conduct the assessment.
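The screening rule above can be sketched in a few lines of Python. This is a minimal illustration, not a legal test: the enum names, the `screen_activity` function, and the `uncertain` flag are all hypothetical, and the threshold of two simply mirrors the "two or more criteria" rule of thumb described above.

```python
from dataclasses import dataclass
from enum import Enum


class Criterion(Enum):
    """The nine screening criteria listed above (names are illustrative)."""
    EVALUATION_OR_SCORING = "evaluation or scoring"
    AUTOMATED_DECISIONS = "automated decision-making with significant effects"
    SYSTEMATIC_MONITORING = "systematic monitoring"
    SENSITIVE_DATA = "sensitive or highly personal data"
    LARGE_SCALE = "large-scale processing"
    DATASET_MATCHING = "matching or combining datasets"
    VULNERABLE_SUBJECTS = "data about vulnerable individuals"
    INNOVATIVE_TECH = "innovative technology or organizational solutions"
    BLOCKS_RIGHTS_OR_SERVICES = "prevents exercising rights or accessing services"


@dataclass(frozen=True)
class ScreeningResult:
    activity: str
    criteria_met: frozenset
    dpia_recommended: bool
    rationale: str


def screen_activity(activity, criteria_met, uncertain=False, threshold=2):
    """Return a screening record; a DPIA is recommended at or above the threshold.

    Assumption: when reviewers flag genuine uncertainty, the sketch defaults
    to recommending the assessment, as the text above suggests.
    """
    met = frozenset(criteria_met)
    if len(met) >= threshold:
        recommended, why = True, f"{len(met)} criteria met (threshold {threshold})"
    elif uncertain:
        recommended, why = True, "outcome uncertain; defaulting to an assessment"
    else:
        recommended, why = False, f"only {len(met)} criteria met and no open uncertainty"
    return ScreeningResult(activity, met, recommended, why)


result = screen_activity(
    "AI-based credit decisioning",
    [Criterion.EVALUATION_OR_SCORING, Criterion.AUTOMATED_DECISIONS],
)
print(result.dpia_recommended, "-", result.rationale)
```

Returning a record rather than a bare boolean keeps the rationale alongside the outcome, so a decision not to run a DPIA is documented as well.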

What Does a Data Protection Impact Assessment Include?

A DPIA has defined components, each serving a specific function in demonstrating accountability. Regulators review these documents against a standard, not against the organization's own judgment about what constitutes sufficient analysis.

A Clear Map of What Data Is Processed and How

The first component is also the one that most DPIAs get wrong. Before assessing risk, an organization needs an accurate account of what personal data is processed, where it originates, how it flows through systems, which parties receive it, how long it is retained, and under what conditions it is deleted.

An inaccurate data map produces an inaccurate risk assessment. If the map misses a data category, the risk analysis for that category never happens. If it omits a vendor, the risks introduced by that vendor are invisible. The quality of every subsequent element of the DPIA depends entirely on the accuracy of this component.
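One way to make those omissions visible is to run a completeness check over the data map before any risk analysis starts. The sketch below assumes a hypothetical `DataFlow` record and a separately maintained vendor list; the field names are illustrative and not taken from any particular tool.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class DataFlow:
    """One row of a data map; field names are illustrative assumptions."""
    system: str
    data_categories: List[str]
    source: str
    recipients: List[str] = field(default_factory=list)
    retention_days: Optional[int] = None
    deletion_method: Optional[str] = None


def find_gaps(flows: List[DataFlow], known_vendors: List[str]) -> List[str]:
    """List the omissions that would leave part of the risk analysis blind."""
    gaps = []
    for flow in flows:
        if not flow.data_categories:
            gaps.append(f"{flow.system}: no data categories recorded")
        if flow.retention_days is None:
            gaps.append(f"{flow.system}: retention period undefined")
        if not flow.deletion_method:
            gaps.append(f"{flow.system}: deletion method undefined")
    # Vendors known from contracts or procurement but absent from every flow.
    mapped = {recipient for flow in flows for recipient in flow.recipients}
    for vendor in sorted(set(known_vendors) - mapped):
        gaps.append(f"{vendor}: vendor missing from every mapped flow")
    return gaps


flows = [
    DataFlow("crm", ["email"], "signup form", ["mailer-co"], 365, "hard delete"),
    DataFlow("analytics", [], "web sdk"),
]
for gap in find_gaps(flows, ["mailer-co", "ad-network"]):
    print(gap)
```

An empty result does not prove the map is accurate. It only shows that the fields a DPIA depends on have been filled in and that every known vendor appears somewhere.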

Evidence That the Processing Is Necessary and Proportionate

GDPR requires that personal data processing be necessary for a specified purpose and limited to what is required for that purpose. A DPIA must demonstrate that the processing being assessed satisfies those requirements. The operative word is demonstrate.

Regulators do not accept assertions of necessity. They expect evidence: documented purpose limitation, a clear account of why the data collected is the minimum required, and an explanation of why the processing achieves the stated purpose in a way that a less privacy-invasive approach could not.

A Specific Analysis of Risks to Individuals

Risk assessment in a DPIA must be specific. Listing categories of potential harm is not sufficient. The assessment must evaluate both the likelihood that a given harm will materialize and the severity of that harm if it does.

The risks to assess include: unauthorized access to personal data; misuse of data beyond its stated purpose; re-identification of pseudonymized or anonymized data; excessive collection that exposes individuals to unanticipated harm; and, for AI systems, discriminatory outputs produced by automated processing. Likelihood and severity are not interchangeable variables. A low-likelihood risk with catastrophic consequences requires different treatment than a high-likelihood risk with minor consequences.
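A small sketch shows why a plain likelihood-times-severity product is not enough on its own. The four-point scales, the score bands, and the escalation rule below are assumptions chosen for illustration; they are not prescribed by GDPR or any regulator.

```python
from enum import IntEnum


class Likelihood(IntEnum):
    REMOTE = 1
    POSSIBLE = 2
    LIKELY = 3
    ALMOST_CERTAIN = 4


class Severity(IntEnum):
    MINOR = 1
    MODERATE = 2
    SERIOUS = 3
    CATASTROPHIC = 4


def rate_risk(likelihood: Likelihood, severity: Severity) -> str:
    """Rate a risk as 'low', 'medium', or 'high'.

    A plain product would score (REMOTE, CATASTROPHIC) and
    (ALMOST_CERTAIN, MINOR) identically at 4. The escalation rule
    treats catastrophic harm as high regardless of likelihood.
    """
    if severity is Severity.CATASTROPHIC:
        return "high"
    score = likelihood * severity
    if score >= 6:
        return "high"
    if score >= 3:
        return "medium"
    return "low"


print(rate_risk(Likelihood.REMOTE, Severity.CATASTROPHIC))
print(rate_risk(Likelihood.ALMOST_CERTAIN, Severity.MINOR))
```

Both calls have the same raw product, yet the first is rated high and the second medium, which is the asymmetry the paragraph above describes.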

Safeguards and an Honest Assessment of Residual Risk

Identifying risk without addressing it produces an incomplete DPIA. Organizations must document the technical and organizational measures applied to reduce identified risks, and assess what risk remains after those measures are in place.

Technical safeguards include encryption, pseudonymization, access controls, data minimization at the point of collection, and monitoring for unauthorized access. Organizational measures include staff training, data governance policies, vendor management controls, and incident response procedures.

The residual risk assessment is where most DPIAs fail. After safeguards are documented, the DPIA must assess honestly whether the remaining risk is acceptable.

Must know

If residual risk remains high after safeguards are applied, Article 36 GDPR requires prior consultation with the competent supervisory authority before processing begins. This obligation is not discretionary.

Organizations that conclude residual risk is acceptable specifically to avoid triggering a DPA consultation are taking on the risk that a regulator reviewing the DPIA will reach a different conclusion. That is a worse position than the consultation itself.
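The residual risk review can be made harder to shortcut by checking that every downgrade is backed by a documented safeguard and a written rationale. The following is a minimal sketch under that assumption; `RiskEntry`, `review_residual`, and the three-level rating scale are hypothetical names, not part of any standard.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

ORDER = {"low": 0, "medium": 1, "high": 2}


@dataclass
class RiskEntry:
    name: str
    inherent: str                     # rating before safeguards
    residual: str                     # rating after safeguards
    safeguards: Tuple[str, ...] = ()
    rationale: str = ""


def review_residual(entries: List[RiskEntry]) -> Dict[str, object]:
    """Flag high residual risks and downgrades that lack documented support."""
    high = [e.name for e in entries if e.residual == "high"]
    unsupported = [
        e.name
        for e in entries
        if ORDER[e.residual] < ORDER[e.inherent]
        and not (e.safeguards and e.rationale.strip())
    ]
    return {
        # Any high residual rating points toward Article 36 prior consultation.
        "prior_consultation_needed": bool(high),
        "high_residual": high,
        "unsupported_downgrades": unsupported,
    }


entries = [
    RiskEntry("re-identification", "high", "medium",
              ("pseudonymization",), "keys are held in a separate system"),
    RiskEntry("unauthorized access", "high", "low"),
    RiskEntry("discriminatory output", "high", "high",
              ("bias testing",), "mitigation still under evaluation"),
]
print(review_residual(entries))
```

An entry in `unsupported_downgrades` is a prompt for reviewers, not a verdict: it marks a conclusion a regulator could reasonably challenge because the record does not show how the risk was reduced.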

Documentation That Supports Regulatory Review

Documentation requirements cover findings from each component, decisions made about safeguards, the rationale behind those decisions, residual risk conclusions, and the identity of individuals consulted during the assessment, including the DPO where one is appointed. This documentation must be maintained in a retrievable form and updated when processing activities change in ways that alter the risk profile.

How to Conduct a Data Protection Impact Assessment: Step-by-Step

Understanding what a DPIA must include is the foundation. Knowing how to execute one is what determines whether the assessment is defensible. The five steps below work as a practical DPIA template, translating the requirements into a repeatable workflow privacy teams can follow.

Step 1: Identify High-Risk Processing Activities

Determine whether the project or system under review meets the threshold for a required DPIA. Evaluate the type of data involved, the scale of processing, the populations affected, and the potential consequences for individuals if something goes wrong. Use the EDPB's nine-criteria framework as your screening standard. Document the outcome of that screening regardless of whether a full DPIA is ultimately required. A documented decision not to conduct a DPIA is itself an accountability record.

Step 2: Map Data Flows and Processing Activities

Trace exactly where personal data originates, how it moves through each system, which internal teams and external vendors have access to it, where it is stored, and how it is eventually deleted or returned. This step is only as reliable as your underlying data inventory. A map built from assumptions or stale system documentation will produce an inaccurate DPIA and leave real risks unassessed.

Step 3: Evaluate Privacy Risks

Risk analysis must be specific to this system, not a list of generic GDPR principles.

Analyze what could realistically go wrong for the individuals whose data is being processed. This includes unauthorized access, re-identification of pseudonymized data, misuse by third parties, function creep beyond the stated purpose, and, for AI systems, discriminatory outputs from automated decision-making. For each identified risk, assess both the likelihood of occurrence and the severity of harm, not just whether a theoretical risk exists.

Step 4: Define Mitigation Measures

Document the specific safeguard for each specific risk. Determine the technical and organizational controls that reduce each identified risk to an acceptable level. Encryption, access controls, pseudonymization, data minimization, retention limits, and vendor contractual obligations all belong here.

Be precise about what each measure addresses and what residual risk remains after it is applied. If residual risk remains high after all available safeguards are in place, Article 36 GDPR requires prior consultation with the relevant supervisory authority before processing begins.

Step 5: Document the DPIA and Review Outcomes

An undocumented assessment is indistinguishable from no assessment at all. The document is the evidence.

Record all findings, the reasoning behind each decision, the safeguards selected, and the residual risk conclusion in a format that can be produced and explained during a regulatory inquiry. Schedule a review trigger for any material change to the processing activity, and assign clear ownership for maintaining the document over time. A structured audit trail that lives in the same system as your data governance policy makes this defensible at scale.
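A dated, versioned record with an explicit review trigger might look like the sketch below. The `DpiaRecord` fields and the 365-day default interval are assumptions for illustration. GDPR does not fix a review period, so the interval should follow the organization's own policy.

```python
from dataclasses import dataclass, replace
from datetime import date, timedelta


@dataclass(frozen=True)
class DpiaRecord:
    """Immutable snapshot of one DPIA version (field names are hypothetical)."""
    processing_activity: str
    version: int
    assessed_on: date
    owner: str
    residual_risk: str
    review_interval_days: int = 365   # assumed policy value, not a legal rule

    def review_due(self, today: date, material_change: bool = False) -> bool:
        """A review is due after a material change or once the interval elapses."""
        deadline = self.assessed_on + timedelta(days=self.review_interval_days)
        return material_change or today >= deadline

    def revise(self, today: date, **changes) -> "DpiaRecord":
        """Return a new version; the earlier record stays intact for the audit trail."""
        return replace(self, version=self.version + 1, assessed_on=today, **changes)


v1 = DpiaRecord("credit decisioning", 1, date(2024, 1, 10), "privacy-team", "medium")
print(v1.review_due(date(2024, 6, 1)))                        # within the interval
print(v1.review_due(date(2024, 6, 1), material_change=True))  # e.g. a new vendor added
v2 = v1.revise(date(2024, 6, 1), residual_risk="low")
print(v2.version, v2.assessed_on, v2.residual_risk)
```

Making the record immutable means each revision produces a new version instead of overwriting the old one, which preserves what the organization knew at each point in time.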

Common Challenges Organizations Face When Conducting DPIAs

The most common failure point in DPIA practice is not methodology but the underlying data infrastructure.

- Incomplete data inventories: Teams frequently discover mid-assessment that they cannot answer basic questions about what data is being processed, where it originates, or which third parties have access to it. The assessment stalls, or it proceeds on assumptions that produce a result with no relationship to actual risk.

- Manual data mapping: Organizations that maintain data flow documentation in spreadsheets or hand-built diagrams are working from records that degrade quickly. Craik Pyke, VP of Infrastructure and Security Engineering at SurveyMonkey, describes the operational reality directly: "We had a lot of concerns about the full accuracy. If you ran it two different times, you may get slightly different results. That was very concerning from a legal responsiveness perspective." His colleague Mahesh Padmanabhan, Principal Data Privacy Architect, put the consequence in concrete terms: "We had multiple instances where that Excel file was in an inconsistent state and we lost information."

What those failures cost SurveyMonkey, which manages four petabytes of data across 40 million users, was not just accuracy. It was legal defensibility at scale. How SurveyMonkey automated privacy governance across that infrastructure is a useful reference for organizations facing the same constraint.

- Cross-functional coordination: A DPIA requires input from engineering, legal, security, and product teams. Without clear ownership and a defined process for gathering that input, assessments either stall at the scoping stage or are completed by a single function with an incomplete picture of the actual processing activity.

- Keeping assessments current: Systems evolve, vendors change, and data uses expand. A DPIA completed at launch and never reviewed again will not reflect what the system is actually doing twelve months later.

Finally, many teams conflate documenting the assessment with completing it. A DPIA that records the questions asked but not the reasoning behind the answers does not meet the accountability standard. Regulators reviewing a DPIA in the context of an investigation expect to see the logic, not just the conclusion.

These challenges compound for organizations deploying AI. The same infrastructure gaps that make DPIAs unreliable also make the EU AI Act's additional assessment obligations harder to satisfy.

When a DPIA Alone Is No Longer Sufficient

A DPIA assesses risks to the rights and freedoms of natural persons arising from personal data processing. That scope was designed around a specific regulatory problem, and for most traditional processing activities, it is sufficient.

AI changes the boundary of that problem. An AI system that uses personal data to make consequential decisions creates two distinct categories of risk. The first is the personal data risk a DPIA addresses directly: how data is collected, processed, stored, and accessed. The second is the fundamental rights risk created by the system's outputs, including discrimination, exclusion, or material harm produced by algorithmic decision-making that cannot be traced to a single data processing act.

These are different risks. They require different frameworks. A DPIA written to satisfy Article 35 cannot double as an EU AI Act Fundamental Rights Impact Assessment, because the questions each document must answer are not the same.

Organizations deploying AI in regulated contexts need to run both assessments as separate exercises with separate documentation. A DPIA for an AI-driven credit decisioning system covers how personal data is collected, whether it is processed lawfully, and what data security risks exist. The FRIA for the same system covers whether the model produces discriminatory outputs, what recourse exists for affected individuals, and how the system affects access to financial services at a population level. Neither document answers the questions the other is designed to address.

As Michael Razeeq, Privacy Counsel at Ramp, puts it: "Laws like the EU AI Act, the Colorado AI Act, and most of the privacy laws require some level of data mapping. And so, I think as you move into some of the AI laws and their requirements, that will only become more important." Organizations that treat these as a single exercise will find gaps in both: the DPIA will not capture algorithmic rights risks, and the FRIA will not satisfy Article 35.

How Ethyca Closes the Gap Between DPIA Requirements and Operational Reality

A DPIA is only as accurate as the data infrastructure behind it. A process that is structurally sound can still produce an inaccurate risk assessment if the data inventory it draws from is stale, incomplete, or out of sync with the systems it describes.

Ethyca builds the infrastructure layer that makes DPIA inputs accurate rather than aspirational, embedded directly into data pipelines rather than bolted on as a compliance overlay. Trusted by 200+ global brands and processing 744M+ preferences annually, the platform consists of five products that each address a specific component of a defensible DPIA: Helios (Data inventory and classification), Janus (Consent enforcement), Lethe (Rights and retention), Fides (Audit trail and records), Astralis (AI governance).

DPIAs are built on data infrastructure. Build the infrastructure that makes them defensible.
