Beyond the Hype: Building a Verifiable AI-Native SDLC

Discover how Ethyca nearly doubled engineering output by replacing guesswork with Knowledge Graphs and formal verification in an AI-native SDLC.

In the last year, the conversation around AI in software engineering has shifted. We’ve moved past the initial excitement of autocompleted code snippets and into a more challenging phase: how do we integrate AI into the Software Development Life Cycle (SDLC) in a way that is actually verifiable, scalable, and safe?

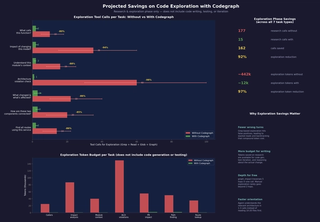

At Ethyca, we aren’t just observing this shift; we’re documenting its impact through data. By moving toward an agentic SDLC, we increased our engineering output by nearly 100% between Q3 and Q4 of last year, and we’ve sustained an additional 25% growth so far in Q1 of 2026.

However, velocity without verification is just a faster path to technical debt. To maintain this momentum, we’ve had to rethink the "wiring" of our development process, moving from AI as a simple suggestion engine to AI as a verifiable system.

The Problem: When AI Agents Get "Lost"

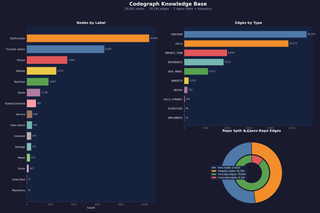

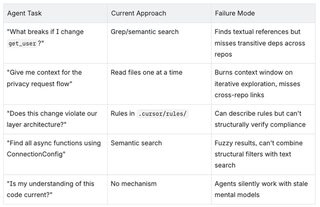

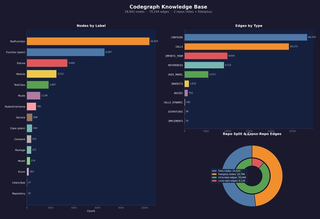

The primary bottleneck in any high-velocity AI workflow is the Context Gap. Even with 1M+ token context windows, AI agents get lost in large, complex codebases. Our specific stack spans two repositories with roughly 1,500 Python files: fides (OSS) and fidesplus (our enterprise extension). Fidesplus imports from fides across ~475 files, overrides core routes, and extends models via inheritance.

Traditional RAG (Retrieval-Augmented Generation) treats these repositories like a pile of text files. It’s effective for "find a similar snippet," but it is notoriously terrible at "understand how this change affects the upstream API." This cross-repo coupling is invisible to text-based tools. When you’re pushing for the kind of throughput we’ve achieved, relying on an agent to "guess" the impact of a change across 1,500 files is a recipe for regression.
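To make the difference concrete, here is a minimal sketch of detecting cross-repo imports structurally rather than by string matching. It uses Python's standard `ast` module; the file paths and contents are hypothetical stand-ins, not our actual code, and the helper name `imports_package` is an assumption made for illustration.

```python
import ast

def imports_package(source: str, package: str) -> bool:
    """Return True if `source` imports `package` or one of its submodules."""
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.level == 0:
            # level == 0 means an absolute import; relative imports stay in-package
            names = [node.module or ""]
        else:
            continue
        if any(n == package or n.startswith(package + ".") for n in names):
            return True
    return False

# Hypothetical files standing in for modules in the enterprise repo
FILES = {
    "api/routes.py": "from fides.api import router\n",
    "models/user.py": "import fides.models as m\n",
    "util/text.py": "import re\nfrom .helpers import slug\n",
    "util/settings.py": "import fidesplus.settings\n",
}

coupled = sorted(path for path, src in FILES.items() if imports_package(src, "fides"))
print(coupled)  # ['api/routes.py', 'models/user.py']
```

A plain text search for `fides` would also match `util/settings.py`, because `fidesplus` contains the same substring. Parsing the import statements avoids that false positive.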

The Work: Knowledge Graphs and Formal Logic

To solve this, I’ve focused on building a two-pillar system designed for systemic autonomy: Knowledge Graph Ontologies and Formal Verification.

We use specialized parsers to treat our codebase not as text, but as a living topology of connected entities. The shift here isn't just about speed; it's about authoritative accuracy. In a traditional workflow, answering a structural question (for example, whether a specific database connector inherits its namespace support without overrides) usually requires a dozen grep calls followed by manual file reads. You’re essentially trying to prove a negative: hunting for overrides that aren't there.

With the Knowledge Graph, I can replace fifteen tool calls with a single query. During a recent planning session, the graph instantly confirmed that a connector had zero overrides in its inheritance path. Because the graph provided an authoritative "no" on overrides, I didn’t have to waste time manually verifying files across two repos. I could narrow the scope of the change with 100% confidence, cutting out the "detective work" that usually slows down a sprint.
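As a rough illustration of what such a query looks like, the sketch below builds a tiny class graph with Python's `ast` module and walks one connector's inheritance path. The class names, the `namespace_support` method, and the helper functions are all hypothetical. Our production graph is far richer than this, so treat it as a sketch of the idea rather than our implementation.

```python
import ast

# Hypothetical connector hierarchy used only for illustration
SOURCE = '''
class BaseConnector:
    def namespace_support(self): ...
    def query(self): ...

class SQLConnector(BaseConnector):
    def query(self): ...

class ExampleConnector(SQLConnector):
    def mask(self): ...
'''

def build_class_graph(source):
    """Map each class name to its base class names and the methods it defines."""
    graph = {}
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.ClassDef):
            bases = [b.id for b in node.bases if isinstance(b, ast.Name)]
            methods = {
                n.name for n in node.body
                if isinstance(n, (ast.FunctionDef, ast.AsyncFunctionDef))
            }
            graph[node.name] = {"bases": bases, "methods": methods}
    return graph

def definers(graph, cls, method):
    """Walk upward from `cls` and list every class on the path that defines `method`."""
    found, stack, seen = [], [cls], set()
    while stack:
        name = stack.pop()
        if name in seen or name not in graph:
            continue
        seen.add(name)
        if method in graph[name]["methods"]:
            found.append(name)
        stack.extend(graph[name]["bases"])
    return found

graph = build_class_graph(SOURCE)
print(definers(graph, "ExampleConnector", "namespace_support"))  # ['BaseConnector']
print(definers(graph, "ExampleConnector", "query"))  # ['SQLConnector', 'BaseConnector']
```

A single definer means the method is inherited with zero overrides along the path. More than one means something between the connector and the base class redefines it and needs to be read.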

But context alone isn't enough; we also need mathematical certainty. This is where TLA+ (Temporal Logic of Actions) comes in. We use it to "blueprint" our most complex logic before we write a single line of code.

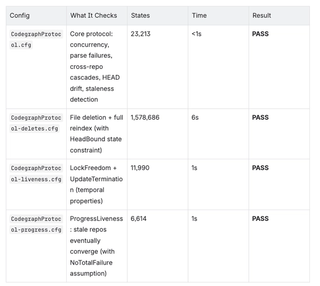

I recently used TLA+ to model our Knowledge Graph logic itself. The model checker explored 1.6 million possible states and checked each one against the model's properties. In the process, it located two critical bugs that would have been nearly impossible to find during a standard manual review. We were able to update the models and the plan before a single line of production code was written.
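For readers who haven't used a model checker, the core idea is exhaustive state exploration: enumerate every reachable state and check an invariant in each one. The sketch below imitates that idea in plain Python. It is not TLA+ and it is not our Knowledge Graph model; the toy system (two workers doing a non-atomic read-then-write on a shared counter) and every name in it are assumptions chosen for illustration.

```python
from collections import deque

# A state is (counter, procs), where each proc is (program_counter, local_copy)
INIT = (0, (("read", 0), ("read", 0)))

def next_states(state):
    """Yield every state reachable in one step by any single worker."""
    counter, procs = state
    for i, (pc, tmp) in enumerate(procs):
        if pc == "read":
            # The worker copies the shared counter into its local variable
            yield (counter, procs[:i] + (("write", counter),) + procs[i + 1:])
        elif pc == "write":
            # The worker writes back its (possibly stale) local copy plus one
            yield (tmp + 1, procs[:i] + (("done", tmp),) + procs[i + 1:])

def invariant(state):
    """Once all workers finish, the counter must equal the number of workers."""
    counter, procs = state
    if all(pc == "done" for pc, _ in procs):
        return counter == len(procs)
    return True

def check(init):
    """Breadth-first search over reachable states; return (states_seen, trace or None)."""
    parent = {init: None}
    queue = deque([init])
    while queue:
        state = queue.popleft()
        if not invariant(state):
            trace = []
            while state is not None:
                trace.append(state)
                state = parent[state]
            return len(parent), trace[::-1]
        for nxt in next_states(state):
            if nxt not in parent:
                parent[nxt] = state
                queue.append(nxt)
    return len(parent), None

states_seen, trace = check(INIT)
for step in trace or []:
    print(step)
```

The checker finds the interleaving where both workers read `0` before either writes, so the final counter is `1` instead of `2`. That lost update is the kind of ordering bug that is easy to miss in review and simple to catch once every interleaving is enumerated.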

Results: Finding the "Hidden" Ripple Effects

The real value of this approach shows up when you stop searching for strings and start searching for meaning.

In a recent pass, the graph surfaced a mismatch in how table names were being quoted across a specialized connector. A standard text search for the utility method would have found the call sites, but it wouldn't have revealed that the method sat in the critical error-handling path for both "retrieve" and "mask" operations.

By seeing the "neighborhood" of the code in the graph, we caught a bug that would have otherwise made it into the PR and required a massive rework. The graph didn't just tell me what code to change; it told me which code I needed to read to understand the blast radius across both repositories.
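One way to picture the "neighborhood" query is as a reverse traversal over call edges: start at the method being changed and collect everything that can reach it. The sketch below assumes a hypothetical call graph. The function names and module paths are invented for illustration and do not mirror our actual code.

```python
from collections import deque

# Hypothetical call edges: caller -> list of callees
CALLS = {
    "fidesplus.connector.retrieve": ["fides.connector.handle_error"],
    "fidesplus.connector.mask": ["fides.connector.handle_error"],
    "fides.connector.handle_error": ["fides.util.quote_table_name"],
    "fides.connector.build_query": ["fides.util.quote_table_name"],
    "fides.api.health": [],
}

def blast_radius(calls, target):
    """Return every function that directly or transitively calls `target`."""
    # Invert the edges so we can walk from callee to caller
    reverse = {}
    for caller, callees in calls.items():
        for callee in callees:
            reverse.setdefault(callee, set()).add(caller)

    seen, queue = set(), deque([target])
    while queue:
        node = queue.popleft()
        for caller in reverse.get(node, ()):
            if caller not in seen:
                seen.add(caller)
                queue.append(caller)
    return seen

affected = blast_radius(CALLS, "fides.util.quote_table_name")
print(sorted(affected))
```

A text search for the utility method would return only its two direct call sites. The traversal also surfaces the `retrieve` and `mask` entry points in the other repository, which reach it only through the error-handling path.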

Why This Matters

This shift excites me because it evolves the role of the software engineer. We are moving away from being producers of code and toward being architects of policy.

At Ethyca, where privacy and data sovereignty are the core product, we cannot rely on "black box" automation. We need systems that are explainable and verifiable. By building these loops, we ensure that as our velocity scales, our safety and compliance scale with it.

The Road Ahead

We are currently on the frontier of AI-driven delivery, but the next step is Systemic Autonomy. We are working toward a future where our Knowledge Graph and verification layers allow our systems to self-heal and optimize while maintaining the rigorous engineering standards Ethyca is known for.

The barrier between a technical requirement and a verified implementation is thinner than ever. Our job is to ensure that as we move faster, we build on a foundation of proof, not just probability.

At Ethyca, we see privacy management as an engineering discipline. Speak with Ethyca’s team to learn how to design a privacy engine from the bottom up.
