Scanning for privacy gaps is only half the job.


Visibility into code is where enforcement starts, not where it ends.

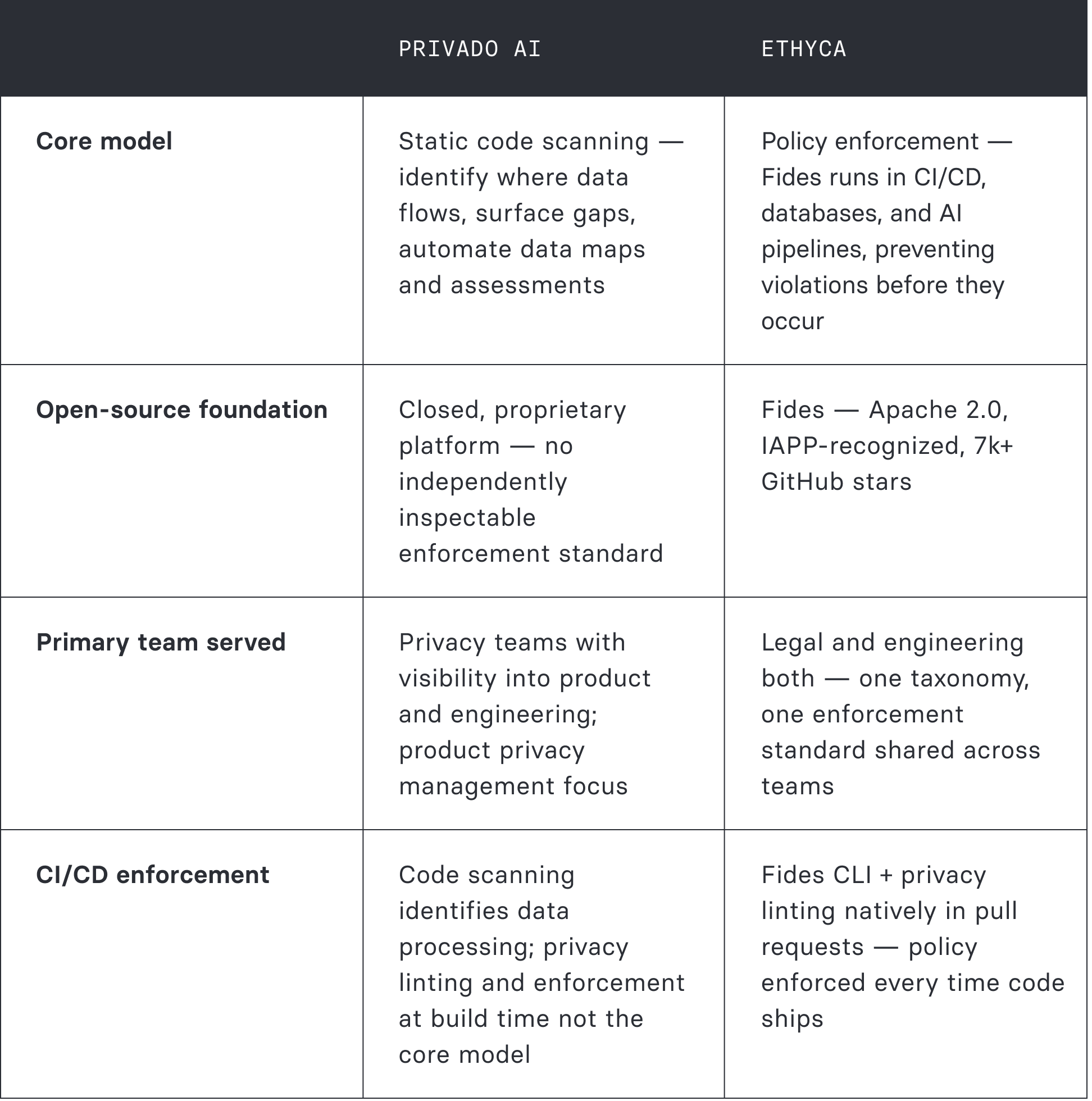

Privado AI and Ethyca share a conviction that privacy belongs inside engineering workflows — not just in compliance dashboards. The difference is what happens after the scan: documentation and mapping, or enforcement at the build and system level.

Privado AI

Static code scanning for privacy gap discovery and automated data mapping

Privado AI scans code repositories to build automated data maps, identifying where personal data is processed across web, app, backend systems, and third parties, without manual questionnaires. Privacy teams get visibility into the product data footprint and can use AI agents to automate assessments, PIAs, DPIAs, and RoPA documentation.

For privacy teams trying to understand what engineering is actually building with personal data, that visibility is meaningful. Privado AI's "product privacy management" framing addresses a real gap: the disconnect between what privacy policies say and what code actually does.

→ Strong for privacy team visibility into product data flows

Ethyca

A map of what's happening isn't the same as control over what can

Scanning code tells you where data flows today. It doesn't prevent new code from creating new gaps tomorrow. Without CI/CD-native enforcement, every code change is a new opportunity for policy drift: visible in the next scan, but not stopped at the build.

Fides is built on a different premise: policy as executable code, enforced at every stage. Privacy linting catches violations before PRs merge. Lethe runs against actual databases for DSR fulfillment. Astralis enforces consent in AI pipelines. The Fides taxonomy gives legal and engineering a shared standard — not just a visibility layer for privacy teams to interpret. Privado AI shows the map. Ethyca runs the rules.

→ Enforcement runs even when the privacy team isn't watching


Companies building trust into data with Ethyca

Where code scanning reaches its boundary.

Static analysis reveals what's already there. Enforcement determines what can exist going forward. For a privacy program that needs to prove policy ran — not just that it was mapped — that distinction matters.

Scanning is a snapshot, not a guardrail

Privado AI scans static code to identify data flows and build maps. That snapshot is accurate as of the scan. But code changes continuously — new features, new integrations, new third-party SDKs. Without enforcement at build time, the map is always catching up to reality. Fides linting in CI/CD means the policy runs before the code ships, not after it's in production.
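To make the snapshot-versus-guardrail distinction concrete, here is a minimal sketch of a build-time policy check in Python. It is not the Fides CLI or its API. The `Declaration` and `Rule` classes, the `evaluate` and `exit_code` functions, and the dotted category and use labels are all hypothetical, chosen only to illustrate a check that runs before code merges.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Declaration:
    """One declared use of personal data by a system (hypothetical schema)."""
    system: str
    data_category: str  # illustrative label, e.g. "user.contact.email"
    data_use: str       # illustrative label, e.g. "marketing.advertising"


@dataclass(frozen=True)
class Rule:
    """Forbid a data category (and its sub-categories) for a given use."""
    name: str
    forbidden_category: str
    forbidden_use: str


def matches(value: str, prefix: str) -> bool:
    # Dotted hierarchical labels: "user.contact" covers "user.contact.email".
    return value == prefix or value.startswith(prefix + ".")


def evaluate(declarations, rules):
    """Return human-readable violations; an empty list means the check passes."""
    violations = []
    for d in declarations:
        for r in rules:
            if matches(d.data_category, r.forbidden_category) and matches(
                d.data_use, r.forbidden_use
            ):
                violations.append(
                    f"{d.system}: {d.data_category} used for {d.data_use} "
                    f"violates '{r.name}'"
                )
    return violations


def exit_code(violations) -> int:
    # A non-zero exit status is what makes a CI job fail the pull request.
    return 1 if violations else 0
```

In a CI job, a script along these lines would call `evaluate` on the declarations touched by a pull request and exit with `exit_code(...)`, so a violation blocks the merge instead of surfacing in a later scan.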

No open standard shared with engineering

Privado AI gives privacy teams visibility into what engineering is building. It doesn't give engineering a tool to enforce privacy in what they build. There is no equivalent to the Fides CLI — the open standard that lets engineers implement policy in code, validated at the PR level. Privacy visibility and engineering enforcement are different capabilities; Privado AI addresses one.

AI governance is assessment-focused

Privado AI's AI governance capabilities center on privacy assessment for AI systems — conducting PIAs, documenting risks, identifying data flows into AI. That's documentation. Ethyca's Astralis enforces which consented data can enter AI training and inference pipelines — preventive controls at the pipeline level, in compliance with the EU AI Act, not an assessment of what went in after the fact.
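The difference between assessing what entered a pipeline and preventing it can be sketched as a consent gate placed in front of a training job. This is an illustration only, not Astralis or any Ethyca API. The `Record` class, the `gate` function, and the purpose label `"ai_training"` are assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Record:
    """A data record plus the purposes its subject consented to (hypothetical)."""
    user_id: str
    text: str
    consented_purposes: set = field(default_factory=set)


def gate(records, purpose):
    """Split records into (allowed, blocked) for the given processing purpose."""
    allowed, blocked = [], []
    for record in records:
        if purpose in record.consented_purposes:
            allowed.append(record)
        else:
            blocked.append(record)
    return allowed, blocked
```

A pipeline would pass only the `allowed` list to training or inference and log the `blocked` list, so the control acts before data enters the model instead of being documented afterwards.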

The pattern underneath all three

Privado AI solves a real problem: privacy teams gaining visibility into product data flows without depending on engineers to fill out questionnaires. That's a meaningful capability at a certain stage of a privacy program's maturity. The limit is that visibility doesn't enforce. A regulator asking whether a privacy policy was applied to a specific record on a specific date needs more than a data map; it needs proof that the policy ran. Fides is built to provide that proof, at every layer of the stack.

Privado AI is proprietary. Ethyca's foundation is open.

Privado AI is a closed platform. Fides is the world's most widely used open-source privacy engineering standard — inspectable, contributable, and deployable independently of Ethyca's commercial platform. The difference matters when you need to prove enforcement, not just show a vendor dashboard.

7k+ GitHub stars

Actively maintained, community-contributed, and deployable independently of Ethyca's commercial platform. Every enforcement decision can be inspected by your team.

Apache 2.0

Open license

No vendor lock-in on the taxonomy itself. Your privacy standard is yours, built on an open specification that Privado AI cannot match with a closed product.

IAPP

Recognized standard

Fides is recognized by the International Association of Privacy Professionals as a governance standard: not a vendor tool, but a shared language the entire industry can use.


"By adopting Ethyca's infrastructure, we're unifying privacy, legal, and engineering around a single source of truth, enabling us to manage data responsibly and confidently as we expand globally."

Director of CRM & Lifecycle Marketing · JustPark

Privado AI vs. Ethyca — side by side

Across the dimensions that determine whether your privacy program produces maps or enforces policy.

Organizations that need privacy enforced inside their stack.

Data-intensive enterprises where visibility isn't sufficient — where policy needs to run inside the systems, the pipelines, and the AI models that process the data.

From code maps to enforcement infrastructure.

Teams moving from Privado AI have typically built strong data flow visibility into their product stack. The transition is additive — that visibility is enriched with system-level enforcement, an open taxonomy engineering can adopt, and AI pipeline governance that goes beyond assessment.

↳ Step 1 — Enrich your Privado data maps with Helios

Data flow maps built from Privado's code scanning migrate as the foundation. Helios adds real-time discovery with direct system hooks, moving from a code-derived view to an enforcement-ready inventory across internal databases and cloud infrastructure.

↳ Step 2 — Translate privacy obligations into Fides

Privacy requirements identified through Privado translate to the Fides taxonomy — the open standard both legal and engineering teams share. Legal obligations become machine-readable rules engineering can implement and validate at the PR level.

↳ Step 3 — Shift from scanning to build-time enforcement

Fides CLI and privacy linting in pull requests mean policy violations are caught before code ships, not discovered in the next scan. Engineers become active participants in enforcement, not subjects of a privacy team's analysis.

↳ Step 4 — Extend to DSR system-level fulfillment

Lethe runs DSR requests against actual databases — erasure and access at the system level. The privacy program moves from documenting rights to executing them.

↳ Step 5 — Add AI pipeline enforcement with Astralis

Astralis enforces which consented data enters AI training and inference, applying preventive controls at the pipeline level. This moves the program from Privado's AI assessment approach to enforcement-level governance for EU AI Act compliance.
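Step 4's move from documenting rights to executing them can be illustrated with a small erasure routine that runs against a real database connection. This is a sketch under stated assumptions, not Lethe's implementation. The `ERASURE_TARGETS` map, the table and column names, and the `erase_subject` function are hypothetical, and SQLite stands in for whatever databases a deployment actually uses.

```python
import sqlite3

# Hypothetical map of where a subject's data lives: table -> column with the user id.
# Table and column names come from this trusted constant, never from user input.
ERASURE_TARGETS = {"orders": "user_id", "newsletter": "user_id"}


def erase_subject(conn: sqlite3.Connection, user_id: str) -> dict:
    """Delete the subject's rows from every mapped table.

    Returns the number of rows removed per table, which can serve as a
    record that the erasure request actually ran.
    """
    removed = {}
    with conn:  # commits on success, rolls back if any statement fails
        for table, column in ERASURE_TARGETS.items():
            cursor = conn.execute(
                f"DELETE FROM {table} WHERE {column} = ?", (user_id,)
            )
            removed[table] = cursor.rowcount
    return removed
```

Running every delete inside one transaction means a failure in any table leaves the subject's data untouched everywhere, so a request is never recorded as fulfilled when it was only partly applied.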

Typical deployment. Large enterprises live across 90+ websites within a month, with forward-deployed engineering support.

Policy runs at build time and query time. Violations prevented, not discovered after the scan.

Apache 2.0 foundation. The shared standard legal and engineering both own. Not a proprietary scanning tool.

Pricing with support included. No MAU variables at renewal. No SKU add-ons required to reach full capability.

Common questions

Questions that surface when privacy teams start asking whether visibility into data flows is sufficient or whether enforcement is needed.

Removing the dependency on engineering questionnaires is a real operational improvement — Privado AI solves a genuine pain point. The follow-on question is what the privacy team does with the map. If the goal is understanding the data footprint and producing documentation, code-derived mapping does that well. If the goal is ensuring that every system built from here forward enforces the privacy policy — that new code can't create new gaps without being caught — that requires enforcement tooling in the engineering workflow itself. Fides linting in CI/CD is that tooling. It doesn't replace visibility; it adds the enforcement layer that visibility alone can't provide.

Privado AI's community work through Bridge Summit is genuine — bringing privacy engineers together to discuss technical challenges is a real contribution. Ethyca's credibility comes from deployment: The New York Times, Ramp, Vercel, WeTransfer, SurveyMonkey — organizations with demanding compliance environments and zero tolerance for gaps. Fides has 7,000+ GitHub stars and is IAPP-recognized as a governance standard. The comparison isn't about who produces better conference content; it's about what the respective tools actually enforce in production.

The distinction is at the enforcement layer. Both platforms share the view that privacy belongs in engineering workflows, not just compliance dashboards. Privado AI's approach is to give privacy teams visibility into what engineering is building — through code scanning that produces data maps without requiring engineers to participate actively. Ethyca's approach is to give engineering a tool to enforce policy themselves — Fides CLI, linting in PRs, policy validation at build time. One is privacy team visibility into engineering. The other is engineering participation in privacy. For organizations where the goal is that privacy policy runs automatically regardless of which engineer wrote the code, the distinction is material.

Ask both: "When an engineer ships new code that processes personal data in a new way, how does your platform ensure it complies with our privacy policy before it reaches production?" Privado AI's answer involves the next scan cycle identifying the new data flow and surfacing it for privacy team review. Ethyca's answer: Fides linting in CI/CD catches the violation at the PR level — the code can't ship until the policy is satisfied. That question reveals whether you have a monitoring program or an enforcement program.